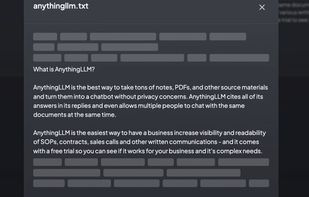

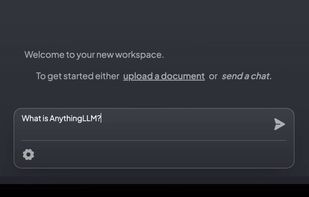

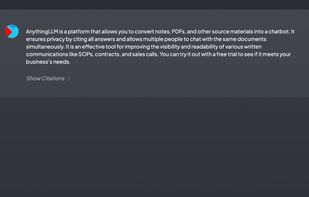

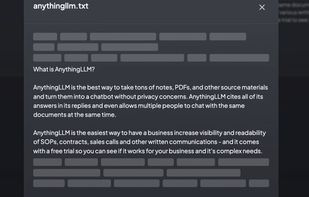

Privacy-focused open-source chatbot enables unlimited document uploads, multi-user support, vector database integration, and intelligent chat from existing files.

Open-source, self-hosted note-taking tool featuring AI-driven retrieval, Markdown support, and full data ownership for privacy-focused document control.

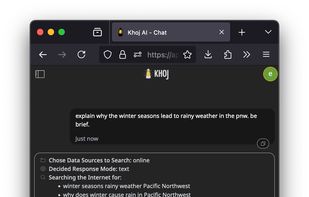

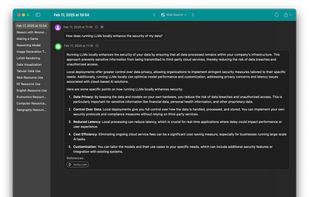

Khoj is an open-source AI second brain that learns from your notes (Obsidian, Emacs) and documents, and has access to the internet. It can replace your search engine, help you read papers, and give you transparent, fast answers.

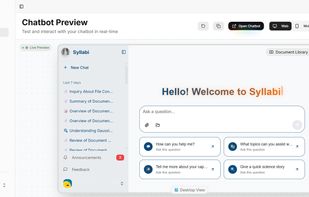

An open-source AI-powered chatbot platform with advanced knowledge base integration, multi-modal support, and seamless integrations.

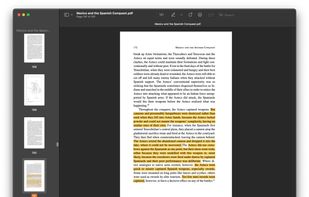

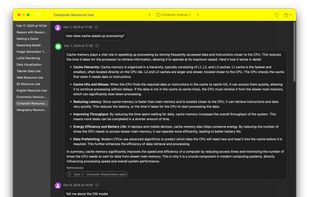

A native macOS app that allows users to chat with a local LLM that can respond with information from files, folders and websites on your Mac without installing any other software.

Run LLMs on AMD Ryzen™ AI NPUs in minutes. Like Ollama, but purpose-built and deeply optimized for AMD NPUs.

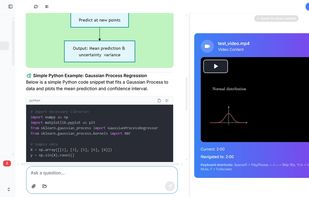

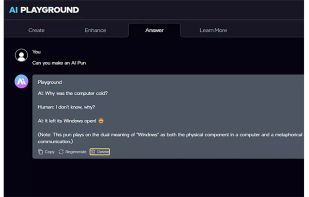

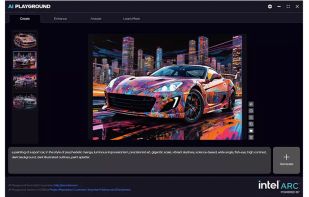

This application provides a full suite of generative AI features for chat, code assistance, document search, image analysis, and image and video generation. All features run offline and are powered by your PC’s Intel® Core™ Ultra with built-in Intel Arc™ GPU or Intel Arc™ dGPU...

A cross-platform Markdown note-taking application dedicated to using AI to bridge recording and writing, organizing fragmented knowledge into a readable note.

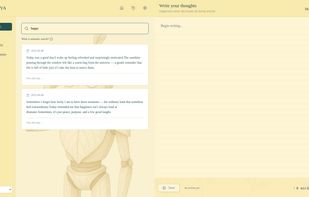

Vinaya Journal is a cross-platform journaling desktop app that integrates local AI via Ollama and a retrieval-augmented generation (RAG) pipeline using ChromaDB. It is designed for offline-first, privacy-conscious AI journaling so that your thoughts stay on your device.

LLM Hub is an open-source Android app for on-device LLM chat and image generation. It's optimized for mobile usage (CPU/GPU/NPU acceleration) and supports multiple model formats so you can run powerful models locally and privately.

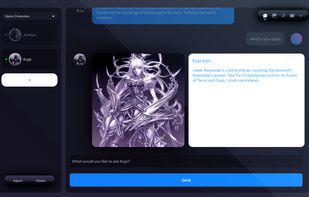

Ragdoll Studio is an open-source, locally hosted web app for creating, interacting with and deploying AI personas. Run models on your own machine with no accounts or API keys required.

A standalone open-source app for easy use of local LLMs, and RAG in particular, to interact with documents and files, similar to NVIDIA's Chat with RTX.

A polyglot document intelligence framework with a Rust core. Extract text, metadata, and structured information from 75+ formats. Available for Rust, Python, Ruby, Java, Go, PHP, Elixir, C#, and TypeScript (Node/Bun/Wasm/Deno), or use it via CLI, REST API, or MCP server.

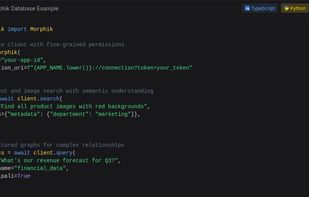

Store, search, and query multi-modal data with fine-grained access control and built-in security. Build AI applications with confidence and speed.

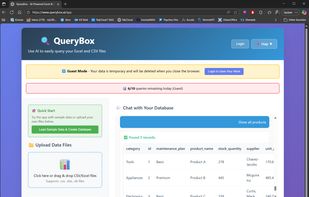

QueryBox transforms CSV, Excel, and PDF files into queryable databases. Ask questions in plain English and get instant answers powered by AI. No SQL knowledge required. Perfect for business owners, analysts, and anyone who needs quick insights from their data.

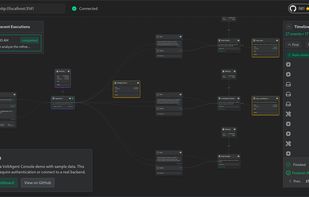

VoltAgent is an open-source TypeScript framework that acts as a toolkit for building AI agent applications. It simplifies their development by providing modular building blocks, standardized patterns, and abstractions.

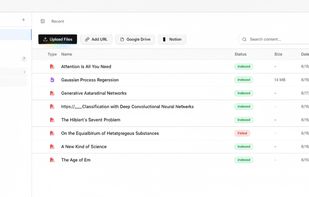

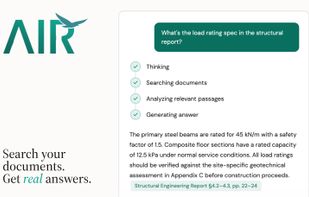

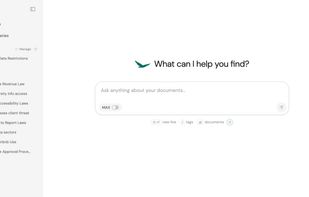

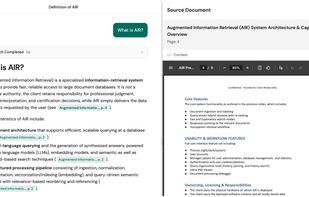

AIR (Augmented Information Retrieval) is a document Q&A tool. Upload your files (PDFs, Word docs, text files), ask questions in plain language, and get answers with citations linked to exact source pages.

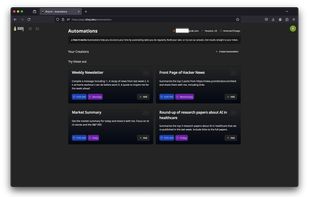

AI-powered chatbot SaaS that uses RAG (Retrieval-Augmented Generation) to answer questions from your own knowledge base. White-label, multi-language, deploys in minutes.