Keywords: Copilot+, DirectML, Windows AI APIs (Windows)

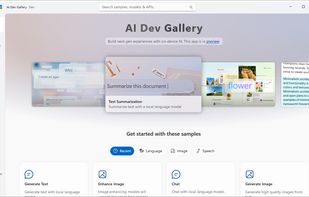

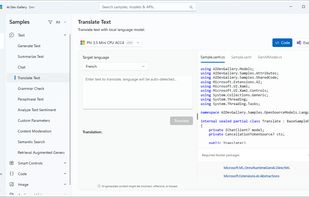

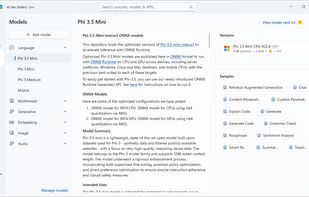

Learn how to add AI with local models and APIs to Windows apps. Discover AI scenarios and models such as Phi, Mistral, Stable Diffusion, Whisper, and many more to delight your users. The AI Dev Gallery is an open-source app designed to help Windows developers integrate AI...

Apple, MediaTek, and Qualcomm (supported or planned backends): https://github.com/MollySophia/rwkv-mobile#supported-or-planned-backends

Keywords: Ryzen AI, XDNA, GAIA, VitisAI

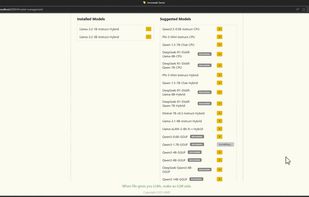

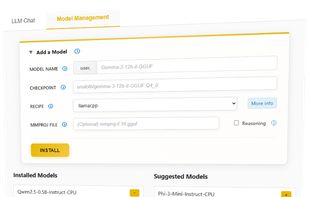

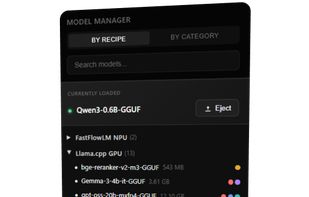

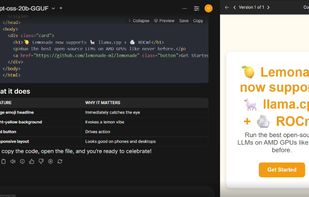

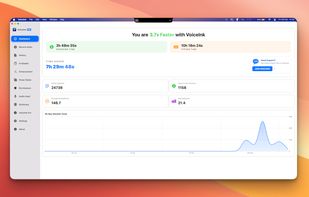

Lemonade helps users discover and run local AI apps by serving optimized LLMs right from their own GPUs and NPUs.

Run LLMs on AMD Ryzen™ AI NPUs in minutes. Just like Ollama, but purpose-built and deeply optimized for AMD NPUs.

Keywords: Neural Engine (ANE), Core ML

The Neural Accelerators introduced in the Apple10 architecture (A19 / M5) are related, but strictly speaking they are part of the GPU and are accessed through MLX or Metal/MPS.

https://engineering.drawthings.ai/p/integrating-metal-flashattention-accelerating-the-heart-of-image-generation-in-the-apple-ecosystem-16a86142eb18

https://docs.drawthings.ai/documentation/documentation/7.coreml

Also supports the latest techniques, such as Metal FlashAttention 2.5 with Neural Accelerators: https://releases.drawthings.ai/p/metal-flashattention-v25-w-neural

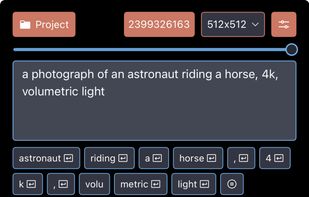

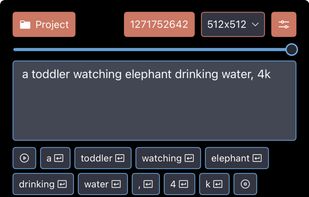

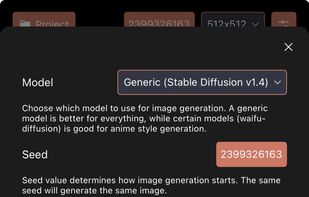

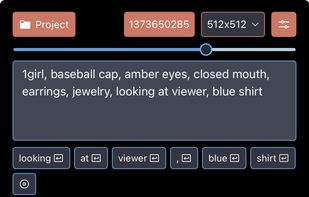

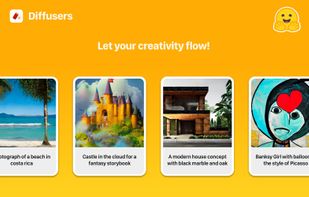

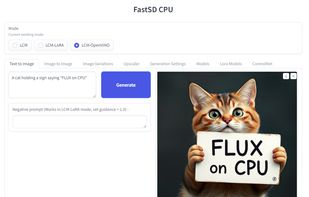

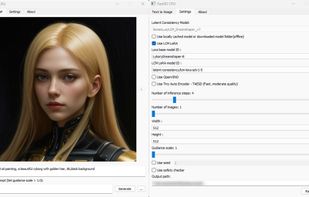

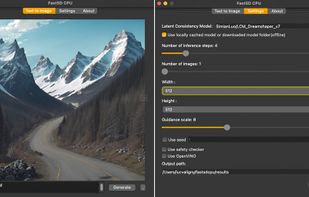

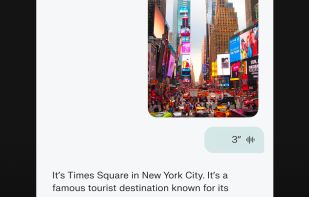

Generate images from text using Stable Diffusion 1.5. Simply describe the image you desire, and the app will generate it for you like magic!

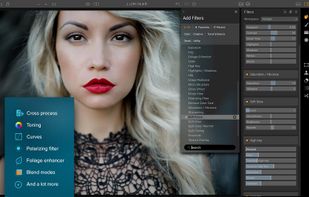

Diffusers is a native Mac app to generate images from a text description of what you want. It uses state-of-the-art models contributed by the community to the Hugging Face Hub, optimized and converted to Core ML for maximum performance.

This app uses Apple's Core ML Stable Diffusion implementation to achieve maximum performance and speed on Apple Silicon based Macs while reducing memory requirements.

A Stable Diffusion app for macOS built with SwiftUI and Apple's ml-stable-diffusion Core ML models.

Keywords: Core Ultra, Intel AI Boost, OpenVINO

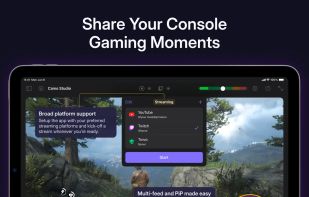

This application provides a full suite of generative AI features for chat, code assistance, document search, image analysis, image and video generation. All features run offline and are powered by your PC’s Intel® Core™ Ultra with built-in Intel Arc GPU or Intel Arc™ dGPU...

Keywords: Hexagon, Qualcomm AI Engine Direct (QNN)

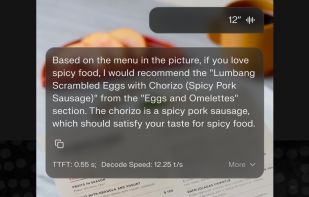

Run Stable Diffusion on Android Devices with Snapdragon NPU acceleration. Also supports CPU/GPU inference.

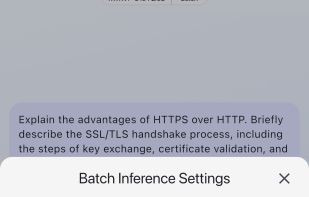

LLM Hub is an open-source Android app for on-device LLM chat and image generation. It's optimized for mobile usage (CPU/GPU/NPU acceleration) and supports multiple model formats so you can run powerful models locally and privately.

Scope: NPUs integrated into SoCs only. Discrete NPUs such as Google TPU and Huawei Ascend NPU are not included.
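Several of the software stacks named in the keyword lines above (DirectML, VitisAI, OpenVINO, QNN, Core ML) are also exposed as ONNX Runtime execution providers, so a portable app can try them in order and fall back to the CPU. The sketch below is illustrative only: the helper `pick_providers`, the `NPU_PROVIDERS` table and the preference order are hypothetical, not taken from any app in this list. Whether a provider actually targets the NPU (rather than the GPU) depends on provider options that are not shown here.

```python
from typing import Iterable, List

# Hypothetical mapping from the keywords in this list to ONNX Runtime
# execution-provider names. Device selection (NPU vs GPU) is configured
# separately through provider options and is not handled here.
NPU_PROVIDERS = {
    "DirectML": "DmlExecutionProvider",
    "VitisAI": "VitisAIExecutionProvider",
    "OpenVINO": "OpenVINOExecutionProvider",
    "QNN": "QNNExecutionProvider",
    "Core ML": "CoreMLExecutionProvider",
}


def pick_providers(available: Iterable[str], preferred: List[str]) -> List[str]:
    """Return the preferred accelerator providers that are available,
    in preference order, always ending with the CPU provider as a fallback."""
    available_set = set(available)
    chosen = [
        NPU_PROVIDERS[key]
        for key in preferred
        if key in NPU_PROVIDERS and NPU_PROVIDERS[key] in available_set
    ]
    chosen.append("CPUExecutionProvider")
    return chosen
```

With `onnxruntime` installed, the result could be passed as `onnxruntime.InferenceSession("model.onnx", providers=pick_providers(onnxruntime.get_available_providers(), ["QNN", "VitisAI", "OpenVINO"]))`, where `model.onnx` is a placeholder path. Keeping the CPU provider last means the session still loads on machines without a supported NPU stack.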