Inferencer

Inferencer lets you run, host and deeply control the latest SOTA AI models (OSS, DeepSeek, Qwen, Kimi, GLM, MiniMax and more) from your own computer.

Cost / License

- Freemium (Subscription)

- Proprietary

Platforms

- Mac

- iPhone

- iPad

Properties

- Privacy focused

- Lightweight

Features

- Syntax Highlighting

- Support for Markdown

- No registration required

- Dark Mode

- Works Offline

- No Coding Required

- Ad-free

- No Tracking

- AI Writing

- Distributed Computing

- AI Chatbot

- AI-Powered

Inferencer News & Activities

Recent activities

- vtudio updated Inferencer

- vtudio updated Inferencer

- Zack_indie added Inferencer as alternative to OpenClaw Launch

- POX added Inferencer as alternative to MiroThinker

- vtudio updated Inferencer

- bnchndlr liked Inferencer

- vtudio added Inferencer as alternative to Claude, Microsoft Copilot and Kimi

- vtudio added Inferencer as alternative to Google Gemini and Grok

- vtudio added Inferencer as alternative to Perplexity

- POX added Inferencer as alternative to Alice AI Assistant

Inferencer information

What is Inferencer?

Inferencer lets you run, host and deeply control the latest SOTA AI models (OSS, DeepSeek, Qwen, Kimi, GLM, MiniMax and more) from your own computer.

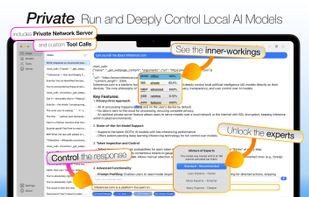

No data is sent to the cloud for processing, which keeps your data private. Advanced inferencing controls give you fine-grained control over the models' accuracy and outputs.

Models

Start in the Models section, where you can download the latest models directly from Hugging Face. Use the distributed compute feature to load a model across two Macs, or use the model streaming feature to run inference on larger models partially from storage.

Server

Use the server feature to host, and connect to, an Inferencer instance running on your Mac, so you can run even larger models over the network.

Chats

Select the model to interact with from the top menu bar and write a prompt to begin. At any point you can switch to another model and continue the chat to see what else it can uncover.

Chat Controls

Control the inferencing parameters, including batching to run multiple chats at the same time, processing intensity, and model streaming to load models larger than available memory.

Token Entropy, Inspection and Control

Select the inspectors to peek into the inner workings of each output token and see the model's confidence levels and alternative choices. Utilise the control response feature to steer the output the model generates, for example to skip the preamble or to direct the model to output structured HTML.
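To make the idea of token entropy concrete, here is a minimal Python sketch of how a confidence measure of this kind can be computed from a next-token probability distribution. It is not Inferencer's API: the function names `token_entropy` and `top_alternatives` and the two example distributions are hypothetical and exist only for illustration.

```python
import math


def token_entropy(probs):
    """Shannon entropy, in bits, of a next-token probability distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def top_alternatives(candidates, k=3):
    """Return the k most probable (token, probability) pairs, highest first."""
    return sorted(candidates.items(), key=lambda kv: kv[1], reverse=True)[:k]


# Hypothetical distributions, made up for this example.
confident = {"Paris": 0.97, "Lyon": 0.02, "Nice": 0.01}
uncertain = {"blue": 0.25, "green": 0.25, "red": 0.25, "grey": 0.25}

for name, dist in (("confident", confident), ("uncertain", uncertain)):
    bits = token_entropy(dist.values())
    print(f"{name}: {bits:.2f} bits, top choices: {top_alternatives(dist, k=2)}")
```

Low entropy means the model strongly prefers one token; high entropy means several alternatives were nearly as likely, which is where inspecting or overriding the choice tends to be most informative.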

Tools and Agents

Supports custom tool calls, shortcuts, and persistent prompt caching, which speeds up agent prompt processing by 99x on cache hits.
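The general idea behind prompt caching can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not how Inferencer implements it: the `PromptCache` class is hypothetical, it keeps its entries in memory rather than persisting them, and it stores a placeholder string where a real system would store the model's processed state for the prefix.

```python
import hashlib


class PromptCache:
    """Toy prefix cache: remembers which prompts have already been processed."""

    def __init__(self):
        self._store = {}  # prefix hash -> placeholder for cached model state

    @staticmethod
    def _key(tokens):
        # Hash the token sequence; the separator keeps token boundaries distinct.
        return hashlib.sha256("\x1f".join(tokens).encode("utf-8")).hexdigest()

    def longest_cached_prefix(self, tokens):
        """Return the length of the longest prefix of `tokens` found in the cache."""
        for n in range(len(tokens), 0, -1):
            if self._key(tokens[:n]) in self._store:
                return n
        return 0

    def process(self, tokens):
        """Return how many tokens still need processing, then cache the prompt."""
        hit = self.longest_cached_prefix(tokens)
        self._store[self._key(tokens)] = f"state for {len(tokens)} tokens"
        return len(tokens) - hit


cache = PromptCache()
history = ["You", "are", "an", "agent", ".", "List", "files"]
first = cache.process(history)               # nothing cached yet
second = cache.process(history + ["again"])  # only the new token is processed
print(first, second)
```

Agent loops tend to resend a long, growing context on every step, so skipping the already-processed prefix is where most of the saving on a cache hit comes from.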

Settings

Includes parental controls, an automatic deletion policy and more.

Comments and Reviews

I've been using this for some time now and I find the features quite useful, especially the token probabilities and the ability to change the course of the AI.