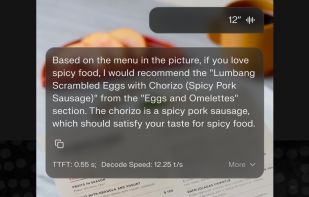

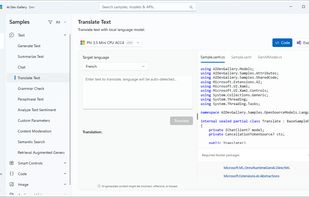

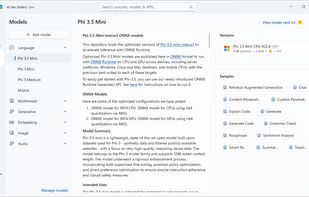

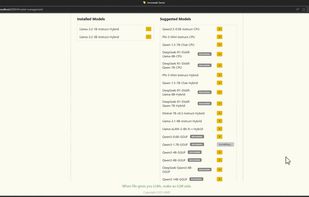

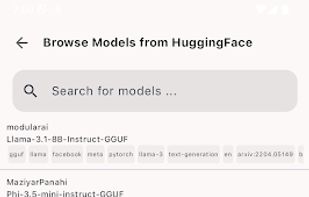

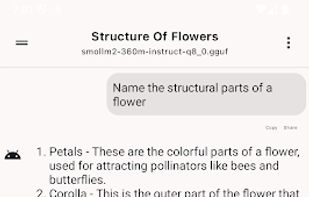

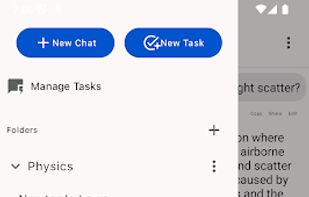

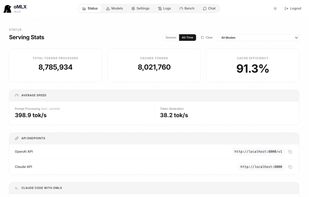

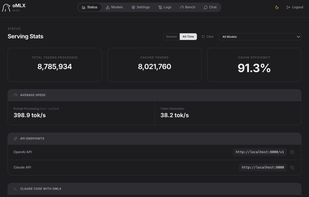

A private, offline, on-device LLM app by Ente. It is open source, requires no account, does no tracking, and runs cross-platform on mobile and desktop devices.

Cost / License

- Free

- Open Source (AGPL-3.0)

Platforms

- Mac

- Windows

- Linux

- Android

- iPhone

- Android Tablet

- iPad