Run AI models locally on your device. Foundry Local provides on-device inference with complete data privacy and requires no Azure subscription.

Cost / License

- Free

- Open Source

Platforms

- Windows

- Mac

LM Studio is described as 'Discover, download, and run local LLMs' and is a large language model (LLM) tool in the AI Tools & Services category. There are more than 50 alternatives to LM Studio for a variety of platforms, including Mac, Windows, Linux, Android and Self-Hosted apps. The best LM Studio alternative is Ollama, which is both free and open source. Other great apps like LM Studio are Jan.ai, GPT4ALL, Open WebUI and AnythingLLM.

Alice is a smart desktop AI assistant application built with Vue.js, Vite, and Electron. It offers an advanced memory system, function calling, MCP support, optional fully local use, and more.

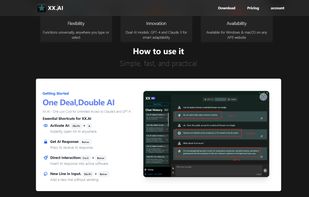

XX.AI offers a simple and cost-effective solution for unlimited access to GPT-4, Claude 3, and DALL-E 3. With essential shortcuts like Shift + A for instant activation, you can harness the power of AI in any writing or content creation task.

OfflineGPT is a fully offline AI app for Android that runs large language models, image generation, and voice input directly on your device. No internet connection, no cloud, no data ever leaves your phone.

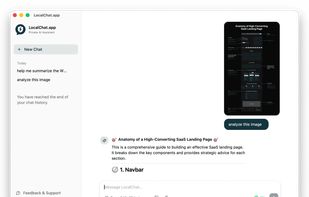

LocalChat is a private AI chat application that runs entirely on your Mac. No cloud servers, no subscriptions, no account required. Just fast, local AI conversations powered by your Apple Silicon hardware.

QVAC turns local AI into a high-quality experience that fits in your pocket. It offers the value you’re used to seeing in other AI assistants without giving up your privacy.

Privacy-focused AI chat assistant compatible with Windows, Mac, and Linux. Operates completely offline or with EU-hosted models, never uploads data, keeps chats secure, supports multiple tabs, prompt libraries, organized conversations, and flexible usage-based pricing.

Ekorbia is a native desktop Integrated Chat and Productivity Environment for local AI models powered by Ollama. Features include chat history, full-text search, prompt library, model comparison chat mode, chat overlay, ephemeral chat, file watches and more.

BlackCortex Lite is a free, self-hosted AI chat application that runs entirely on your machine. No cloud, no subscriptions, no data leaving your network. Built for home use — launch the exe, open a browser, and everyone on your LAN can use it immediately.

vMLX provides features that no other MLX inference app offers, LM Studio included: KV cache quantization (saving 2-4x the RAM), prefix caching, and full VL support.