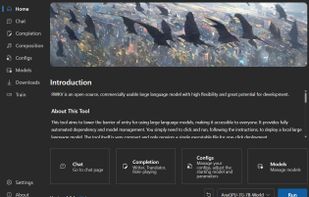

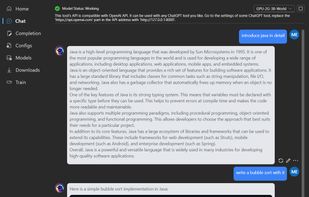

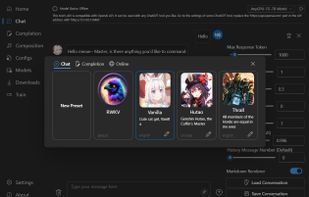

LM Studio is described as 'Discover, download, and run local LLMs' and is a Large Language Model (LLM) tool in the AI Tools & Services category. There are more than 50 alternatives to LM Studio for a variety of platforms, including Mac, Windows, Linux, Android, and Self-Hosted apps. The best LM Studio alternative is Ollama, which is both free and open source. Other great apps like LM Studio are Jan.ai, GPT4All, Open WebUI, and AnythingLLM.

LM Studio alternatives are mainly Large Language Model (LLM) Tools, but if you're looking for AI Chatbots or AI Writing Tools, you can filter on that. Other popular filters include Open Source and Windows + Open Source. These are just examples; use the filter bar below to find more specific alternatives to LM Studio.