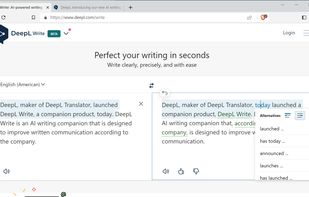

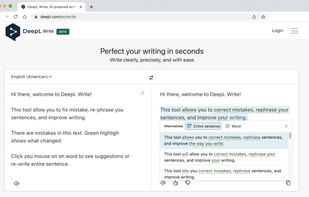

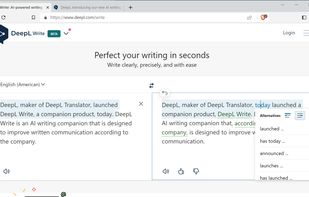

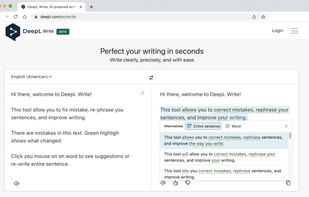

An AI tool enhancing English and German communication by offering robust suggestions on phrasing, tone, style, and grammar, ideal for professionals, multilingual teams, and varied proficiency users.

Follamac is described as 'You need Ollama running on your localhost with some model. Once Ollama is running, the model can be pulled from Follamac or from the command line. From the command line, type something like: `ollama pull llama3`. If you wish to pull from Follamac, you can write llama3 into "Model name to...' and is an AI chatbot in the AI tools & services category. There are more than 50 alternatives to Follamac for a variety of platforms, including Mac, web-based, Windows, Linux and iPhone apps. The best Follamac alternative is ChatGPT, which is free. Other great apps like Follamac are Ollama, Perplexity, Google Gemini and Claude.
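The description above says a model must be available in a locally running Ollama before Follamac can use it. As a rough sketch of that check outside Follamac, the Python below looks for a model in Ollama's local model list and builds a pull request for it if it is missing. It assumes Ollama's default address `http://localhost:11434` and its `/api/tags` and `/api/pull` REST endpoints; the helper names (`has_model`, `build_pull_request`, `ensure_model`) are hypothetical and not part of Follamac or Ollama, and older Ollama versions may expect the key `name` instead of `model` in the pull body.

```python
import json
import urllib.request

# Assumption: Ollama's default local address; adjust if yours differs.
OLLAMA_URL = "http://localhost:11434"


def has_model(tags_response: dict, name: str) -> bool:
    """Return True if `name` appears in a parsed /api/tags response."""
    for entry in tags_response.get("models", []):
        # Ollama reports names with a tag suffix, e.g. "llama3:latest".
        full_name = entry.get("name", "")
        if full_name == name or full_name.split(":")[0] == name:
            return True
    return False


def build_pull_request(name: str, base_url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build (but do not send) a POST request asking Ollama to pull `name`."""
    # "stream": False asks for one JSON reply instead of progress chunks.
    body = json.dumps({"model": name, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/api/pull",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ensure_model(name: str, base_url: str = OLLAMA_URL) -> None:
    """Pull `name` only if the local Ollama server does not list it yet."""
    with urllib.request.urlopen(f"{base_url}/api/tags") as resp:
        tags = json.load(resp)
    if has_model(tags, name):
        print(f"{name} is already available locally")
        return
    with urllib.request.urlopen(build_pull_request(name, base_url)) as resp:
        print(json.load(resp))
```

With Ollama running, calling `ensure_model("llama3")` would have roughly the same effect as the `ollama pull llama3` command quoted above. The two helper functions are kept free of network access so they can be checked without a server.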

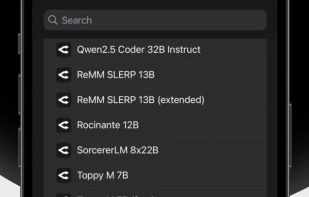

Open-source AI platform for deploying language models across desktop and mobile systems, features model selection, on-premises setup, enterprise-level encryption, advanced security auditing, and flexibility for organizations seeking total data ownership and compliance.

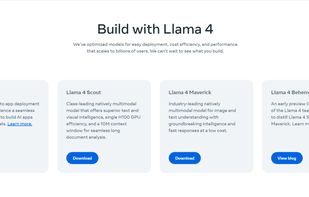

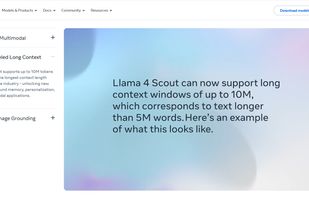

As part of Meta’s commitment to open science, today we are publicly releasing Llama (Large Language Model Meta AI), a state-of-the-art foundational large language model designed to help researchers advance their work in this subfield of AI.

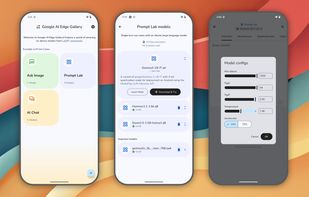

A gallery that showcases on-device ML/GenAI use cases and allows people to try and use models locally.

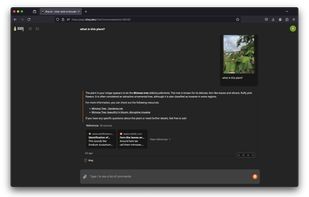

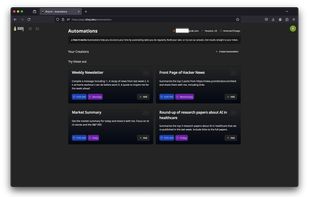

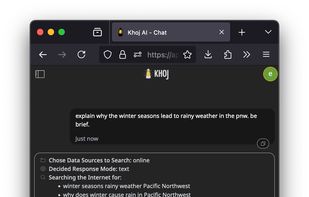

Khoj is an open-source AI second brain that learns from your notes (Obsidian, Emacs) and documents, and has access to the internet. It can replace your search engine, help you read papers, and get you transparent, fast answers.

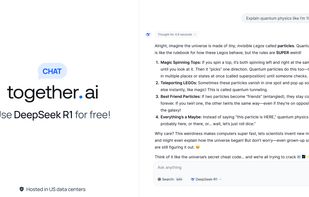

Together Chat is a next-generation consumer app designed to let you interact seamlessly with today's most popular open-source models, including free access to DeepSeek R1, securely hosted in North America.

Leo is an AI-powered intelligent assistant, built right into the browser. Leo can answer questions, help accomplish tasks, and more.

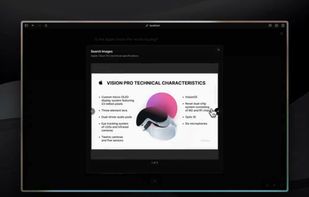

Morphic is a fully open-source AI-powered answer engine with a generative UI. Built with the Vercel AI SDK, it delivers awesome streaming results.

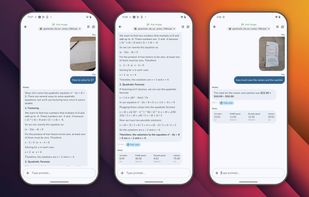

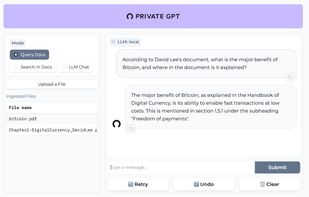

Ingest documents and ask questions about them without an internet connection, using the power of LLMs. 100% private: no data leaves your execution environment at any point.

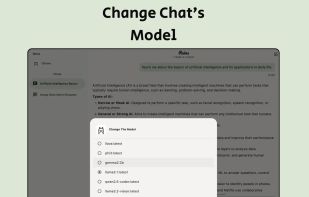

Customizable interface for private, on-device AI model chatting, open source and closed source LLM connections, OpenRouter support, custom backend support, and unified access to language models without reliance on a single provider, ensuring privacy and flexibility.

LlamaGPT is a chatbot that provides a ChatGPT-like experience, with no data leaving your device.

Empowering LLM researchers and hobbyists with seamless control over self-hosted models. Connect remotely, customize prompts, manage chats, and fine-tune configurations. All in one intuitive app.