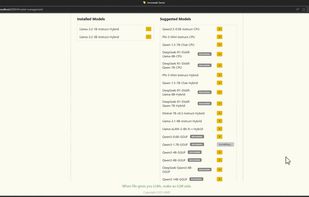

AI Playground is described as 'This application provides a full suite of generative AI features for chat, code assistance, document search, image analysis, image and video generation. All features run offline and are powered by your PC’s Intel® Core Ultra with built-in Intel Arc GPU or Intel Arc dGPU' and is a large language model (LLM) tool in the AI Tools & Services category. There are more than 10 alternatives to AI Playground for a variety of platforms, including Windows, Mac, Linux, Android and iPhone apps. The best AI Playground alternative is Ollama, which is both free and open source. Other great apps like AI Playground are AnythingLLM, A1111 Stable Diffusion WEB UI, Comfy and InvokeAI.

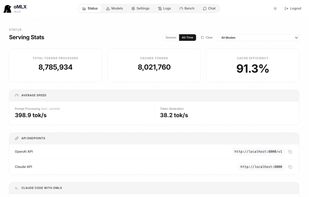

AI00 RWKV Server is an inference API server for the RWKV language model based upon the web-rwkv inference engine.

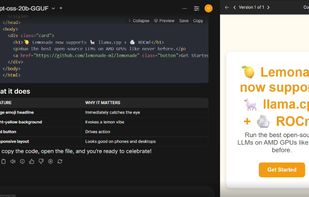

Lemonade helps users discover and run local AI apps by serving optimized LLMs right from their own GPUs and NPUs.

A fast AI Video Generator for the GPU Poor. Supports Wan 2.1/2.2, Qwen Image, Hunyuan Video, LTX Video and Flux.

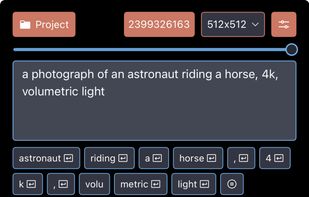

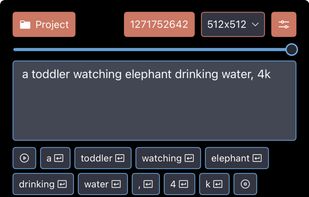

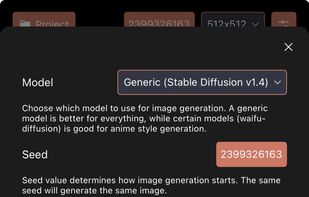

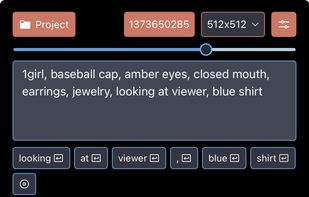

Draw Things provides a comprehensive but still easy-to-use mobile and desktop solution for AI-based art generation. It packs all the power of Stable Diffusion into a sleek iOS and Mac app that lets you create, upscale and edit AI art, totally offline, free and privacy safe.

SwarmUI (formerly StableSwarmUI) is a modular Stable Diffusion web user interface, with an emphasis on making power tools easily accessible, high performance, and extensibility.

LLM inference server with continuous batching & SSD caching for Apple Silicon — managed from the macOS menu bar.

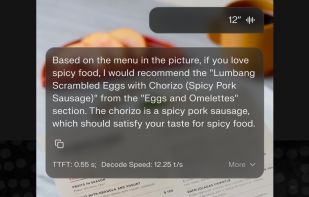

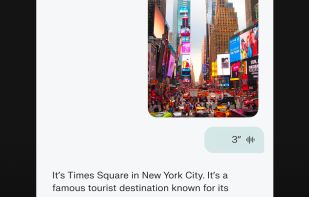

The first fully functional, standalone AI assistant for mobile devices with powerful tool-calling capabilities.

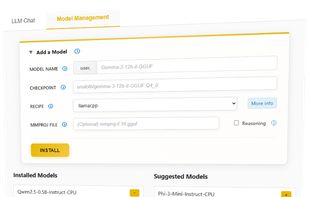

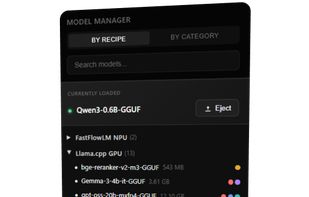

Run LLMs on AMD Ryzen™ AI NPUs in minutes. Just like Ollama - but purpose-built and deeply optimized for the AMD NPUs.

LLM Hub is an open-source Android app for on-device LLM chat and image generation. It's optimized for mobile usage (CPU/GPU/NPU acceleration) and supports multiple model formats so you can run powerful models locally and privately.

The main goal of llama.cpp is to enable LLM inference with minimal setup and state-of-the-art performance on a wide range of hardware - locally and in the cloud.