oMLX

LLM inference server with continuous batching & SSD caching for Apple Silicon — managed from the macOS menu bar.

Cost / License

- Free

- Open Source (Apache-2.0)

Platforms

- Mac

Features

Properties

- Distraction-free

- Lightweight

Features

- Apple Silicon support

oMLX News & Activities

Recent activities

- justarandom added oMLX

- justarandom added oMLX as alternative to DeepSeek, Jan.ai, AnythingLLM and Alpaca - Ollama Client

oMLX information

What is oMLX?

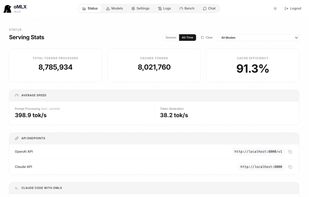

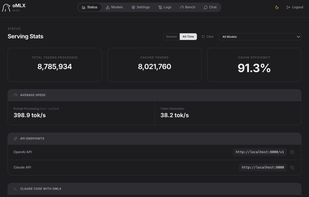

Built for the way agents actually work.

Coding agents invalidate the KV cache dozens of times per session. oMLX persists every cache block to SSD — so when the agent circles back to a previous prefix, it's restored from disk in milliseconds, not recomputed from scratch. 01 — CORE Paged SSD KV caching Cache blocks are persisted to disk in safetensors format. Two-tier architecture: hot blocks stay in RAM, cold blocks go to SSD with LRU policy. Previously seen prefixes are restored across requests and server restarts — never recomputed. 02 — THROUGHPUT Continuous batching Handles concurrent requests through mlx-lm's BatchGenerator. Up to 4.14× generation speedup at 8× concurrency. No more queuing behind a single request. 03 — APP Native macOS menu bar app Start, stop, and monitor the server from your menu bar. Web dashboard for model management, chat, and real-time metrics. Signed, notarized, with in-app auto-update. Not Electron. 04 — MODELS Multi-model serving LLM, VLM, embedding, and reranker models loaded simultaneously. LRU eviction when memory runs low. Browse and download models directly from the admin dashboard. 05 — API OpenAI + Anthropic drop-in Compatible with Claude Code, OpenClaw, Cursor, and any OpenAI-compatible client. Native /v1/messages Anthropic endpoint. Web dashboard generates the exact config command for each tool. 06 — TOOLS Tool calling + MCP Supports all major tool calling formats: JSON, Qwen, Gemma, GLM, MiniMax. MCP tool integration and tool result trimming for oversized outputs. Configurable per model.