Run Llama, Gemma, Qwen, DeepSeek, and more locally on your iPhone, iPad, and Mac. Offline. Private. No login. Optimized for Apple Silicon.

Off Grid Mobile is an app described as 'The Swiss Army Knife of offline AI. Chat, speak, and generate images. Privacy first, zero internet. Download an LLM and use it on your mobile device. No data ever leaves your phone'. There are more than 50 alternatives to Off Grid Mobile across a variety of platforms, including Mac, Windows, web-based, iPhone, and Android. The best Off Grid Mobile alternative is Ollama, which is both free and open source. Other great apps like Off Grid Mobile are Jan.ai, Claude, GPT4All, and Microsoft Copilot.

Experience the power of RWKV models directly on your device. Completely offline, privacy-first, and efficient. No internet required.

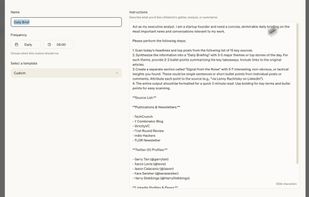

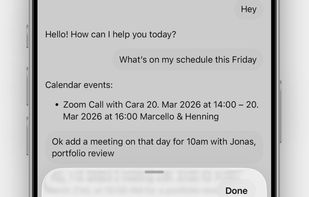

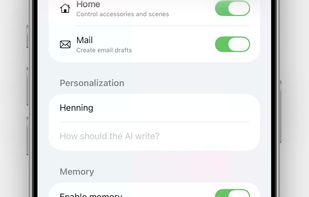

A personal assistant with desktop context that lets you recall Mac files, notes, and conversations on your iPhone, resume chats seamlessly, and enjoy synced access anywhere. No new phone data is collected; only Mac-based context is accessed for continuity.

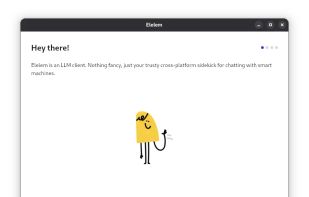

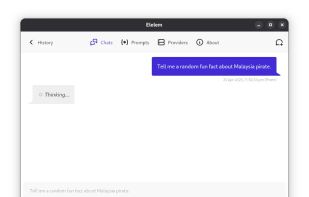

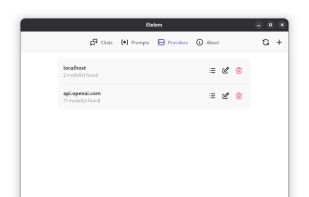

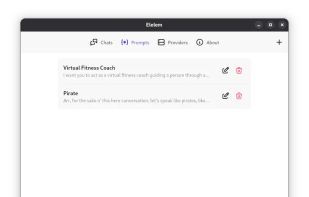

Elelem is a versatile LLM client that connects seamlessly with OpenAI API compatible services. Effortlessly create and switch between multiple custom prompts to match your needs. Open source and cross-platform, Elelem puts control in your hands with your own API key; without it...

Connect your local or hosted instance using your URL and API key. Once connected, all available models are loaded automatically. You can switch between them at any time, start new chats, or continue existing ones in a clean and focused interface.
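As a rough illustration of what "connect with a URL and API key, then load the available models" can look like against an OpenAI API compatible server, here is a minimal Python sketch. It is not taken from any of the apps listed here. It assumes the server exposes the standard `GET /models` endpoint under the base URL you supply and accepts a bearer token; the function names and the example base URL are hypothetical.

```python
import json
import urllib.request


def build_models_request(base_url: str, api_key: str) -> urllib.request.Request:
    """Build a GET request for the model list of an OpenAI-compatible server."""
    # Assumption: base_url already includes any version prefix, e.g. ".../v1".
    url = base_url.rstrip("/") + "/models"
    return urllib.request.Request(url, headers={"Authorization": f"Bearer {api_key}"})


def parse_model_ids(payload: dict) -> list[str]:
    """Extract model ids from a {"data": [{"id": ...}, ...]} list response."""
    return [entry["id"] for entry in payload.get("data", [])]


def list_models(base_url: str, api_key: str) -> list[str]:
    """Fetch the model ids from the server (performs a network call)."""
    request = build_models_request(base_url, api_key)
    with urllib.request.urlopen(request, timeout=10) as response:
        return parse_model_ids(json.load(response))
```

Calling `list_models("http://localhost:8080/v1", "your-key")` would return the ids a client could then offer in a model switcher, provided a compatible server is actually listening at that (hypothetical) address.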

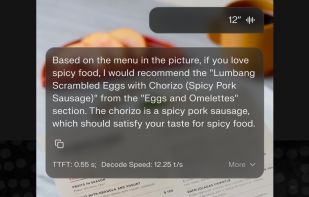

Experience the power of AI at your fingertips with Private LLM, an AI chatbot that runs on your iPhone, iPad, and Mac without needing an internet connection.

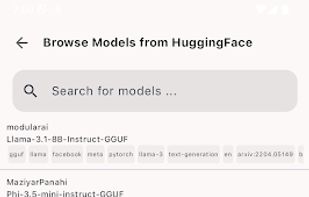

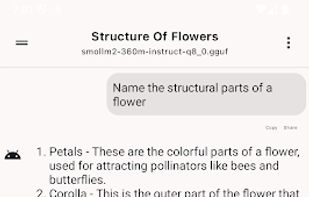

SmolChat allows you to download and run popular LLMs on your Android device, locally, without needing an internet connection. Customize the model used for each chat, tune settings like temperature and min-p, and pin your favourite chats on the home-screen with shortcuts.
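For readers unfamiliar with the two sampling settings mentioned above, the sketch below shows one common way temperature and min-p are combined. It is only an illustration, not SmolChat's actual code: the function name is hypothetical, and applying temperature before the min-p cutoff is an assumption, since implementations differ on the order.

```python
import math


def min_p_filter(logits, temperature=1.0, min_p=0.05):
    """Return a renormalized distribution after temperature scaling and min-p filtering."""
    # Temperature scaling: values below 1 sharpen the distribution, above 1 flatten it.
    scaled = [x / temperature for x in logits]
    # Numerically stable softmax.
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    probs = [e / total for e in exps]
    # Min-p: drop tokens whose probability is below min_p times the top probability.
    threshold = min_p * max(probs)
    kept = [p if p >= threshold else 0.0 for p in probs]
    norm = sum(kept)
    return [p / norm for p in kept]
```

A sampler would then draw the next token from the returned probabilities; a higher `min_p` prunes more unlikely tokens, while a higher `temperature` spreads probability across more of them.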

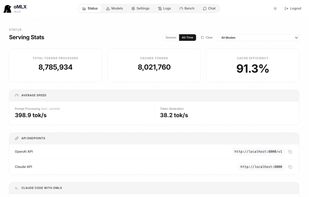

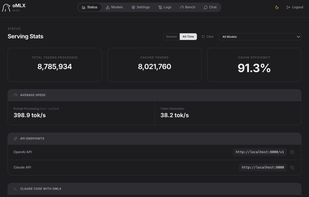

LLM inference server with continuous batching & SSD caching for Apple Silicon — managed from the macOS menu bar.

📱 The first fully functional, standalone AI assistant for mobile devices with powerful tool-calling capabilities 📱

LLM Hub is an open-source Android app for on-device LLM chat and image generation. It's optimized for mobile usage (CPU/GPU/NPU acceleration) and supports multiple model formats so you can run powerful models locally and privately.