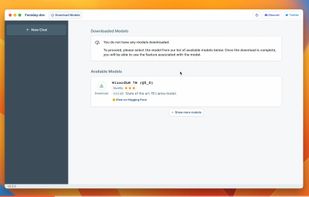

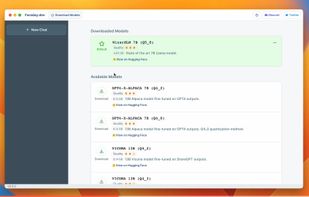

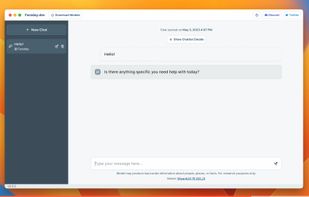

Msty AI is described as 'The simplest way to use local and online AI models. Interact with any AI model with just a click of a button' and is an AI chatbot in the AI tools & services category. There are more than 25 alternatives to Msty AI for a variety of platforms, including Mac, Windows, Linux, web-based, and iPhone apps. The best Msty AI alternative is Perplexity, which is free. Other great apps like Msty AI are Jan.ai, GPT4ALL, Open WebUI, and Microsoft Copilot.

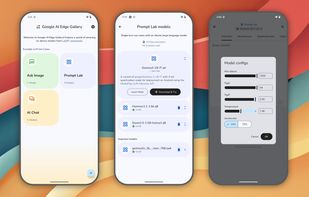

A gallery that showcases on-device ML/GenAI use cases and allows people to try and use models locally.

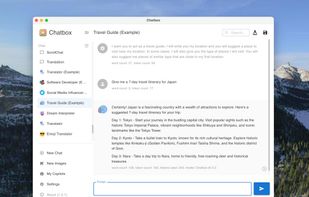

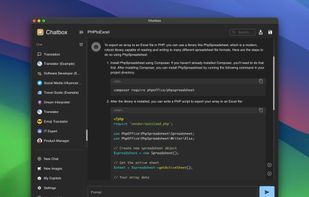

Unleash the power of artificial intelligence right at your fingertips with Chatbox, the versatile client application seamlessly integrated across all major platforms – Windows, Mac, Linux, Web, and iOS.

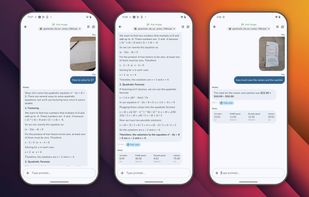

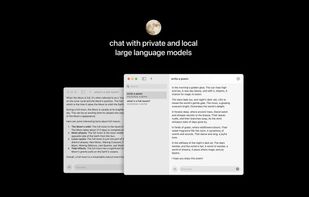

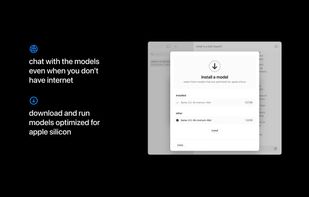

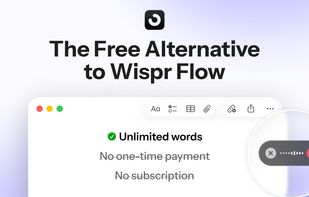

The simplest way to use private LLMs: works fully offline and privately, with no internet connection required. Runs models on-device, optimized for Apple silicon.

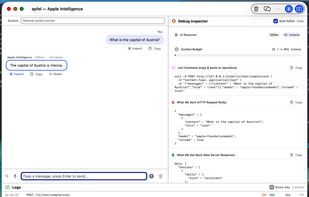

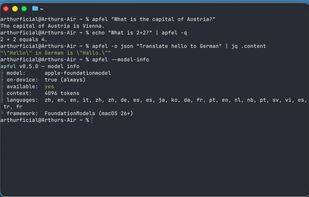

Access Apple’s local foundation model through a command-line tool with an OpenAI-compatible HTTP server, full function and schema calling, UNIX-friendly features, file attachments, JSON output, privacy-preserving on-device inference, and zero recurring costs.

Onit AI Copilot offers a comprehensive desktop AI chat assistant with local processing and multi-provider support. It prioritizes customization, extensibility, and user privacy by operating entirely on-device without needing an internet connection, ensuring data security.

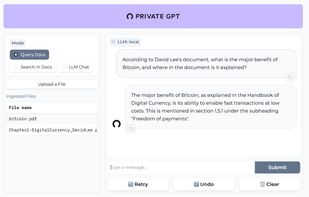

Ask questions of your documents without an internet connection, using the power of LLMs. 100% private: no data leaves your execution environment at any point. You can ingest documents and query them entirely offline.

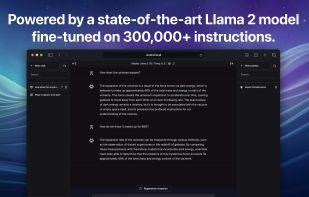

LlamaGPT is a chatbot that provides a ChatGPT-like experience, with no data leaving your device.

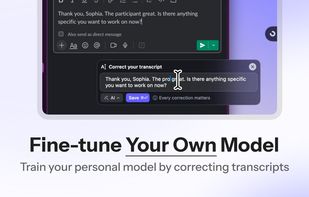

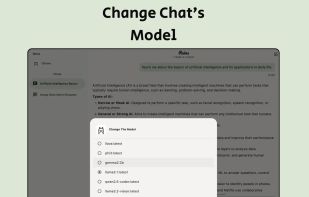

Empowering LLM researchers and hobbyists with seamless control over self-hosted models. Connect remotely, customize prompts, manage chats, and fine-tune configurations. All in one intuitive app.

Run open-source LLMs on your computer. You can download and create custom characters for your models. Works offline. The app has since been renamed Backyard, but it is otherwise the same.