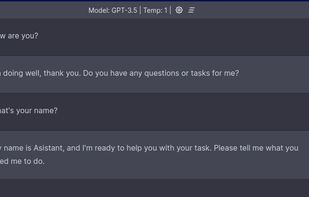

Empowering LLM researchers and hobbyists with seamless control over self-hosted models. Connect remotely, customize prompts, manage chats, and fine-tune configurations. All in one intuitive app.

MLC LLM is described as "Machine learning compiler and high-performance deployment engine for large language models. The mission of this project is to enable everyone to develop, optimize, and deploy AI models natively on everyone’s platforms" and is an AI chatbot in the AI Tools & Services category. There are more than 50 alternatives to MLC LLM for a variety of platforms, including Mac, Windows, iPhone, Android and iPad apps. The best MLC LLM alternative is ChatGPT, which is free. Other great apps like MLC LLM are Lumo by Proton, Ollama, Google Gemini and GPT4ALL.

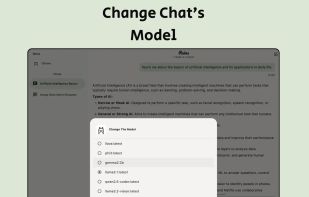

Empowering LLM researchers and hobbyists with seamless control over self-hosted models. Connect remotely, customize prompts, manage chats, and fine-tune configurations. All in one intuitive app.

Complete LLM chatbot implementation, offering tokenization, pretraining, finetuning, evaluation, inference, and a simple web UI. Runs on a single 8xH100 node, features a hackable codebase, dependency-lite design, and automates the full workflow via scripts.

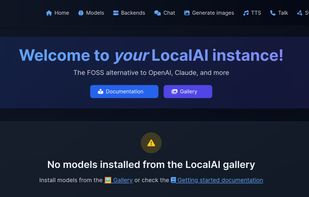

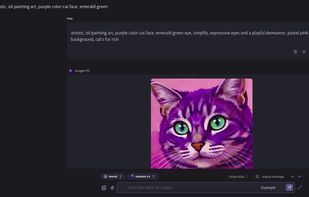

Drop-in OpenAI replacement: on-device and local-first, generating text, images, speech, music, and more. Backend-agnostic (llama.cpp, diffusers, bark.cpp, etc.), with optional distributed inference (P2P/federated).

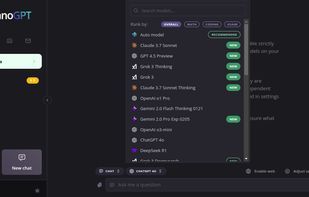

NanoGPT is revolutionizing AI access with a core mission: democratizing state-of-the-art models like ChatGPT, Claude, and more for everyone, globally. We believe cutting-edge AI should be accessible, not expensive or complex.

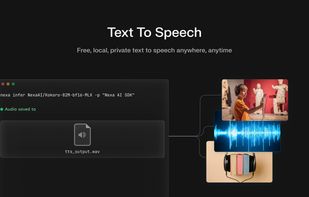

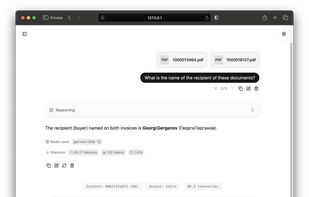

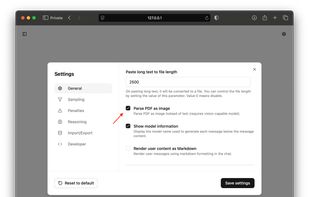

Chat with AI models, generate images, convert books into audiobooks, and run text-to-speech – all locally on your device. No cloud. No compromises.

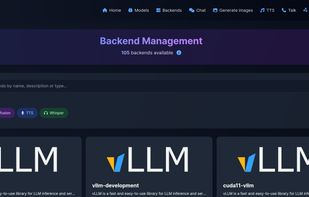

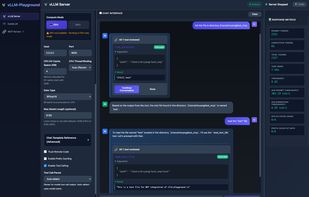

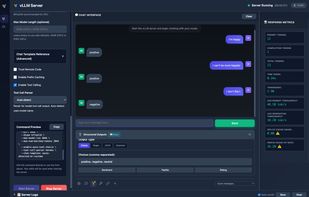

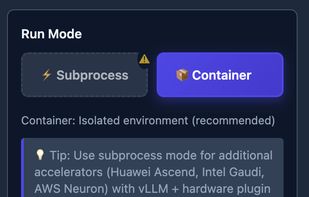

A modern web interface for managing and interacting with vLLM servers (www.github.com/vllm-project/vllm). Supports both GPU and CPU modes, with special optimizations for macOS Apple Silicon and enterprise deployment on OpenShift/Kubernetes.

Run frontier LLMs and VLMs with day-0 model support across GPU, NPU, and CPU, with comprehensive runtime coverage for PC (Python/C++), mobile (Android & iOS), and Linux/IoT (Arm64 & x86 Docker). Supporting OpenAI GPT-OSS, IBM Granite-4, Qwen-3-VL, Gemma-3n, Ministral-3, and more.

Privacy-first AI that runs on-device on iPhone and iPad. It manages files, calendar, contacts, and health data offline, analyzes PDFs, enables secure chats and custom briefings, and supports 13 languages, with optional cloud models via your own OpenAI API key for flexibility.

The main goal of llama.cpp is to enable LLM inference with minimal setup and state-of-the-art performance on a wide range of hardware - locally and in the cloud.

Run LLMs on AMD Ryzen™ AI NPUs in minutes. Like Ollama, but purpose-built and deeply optimized for AMD NPUs.

A comprehensive AI suite for Galaxy devices that includes photo, video, and audio editing, generative and side-by-side tools, background noise removal, writing and note-taking assistance, AI-powered briefings, real-time information, content organization, and seamless device integration.

OfflineLLM offers unlimited, private, offline, free access to AI, 24/7. Augment your day-to-day life by using this AI chatbot for a wide range of tasks.