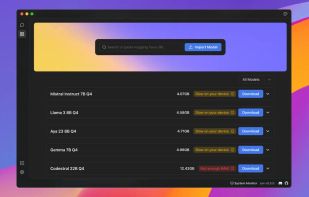

Facilitates local deployment of Llama 3, Code Llama, and other language models, enabling customization and offline AI development. Perfect for creating personalized AI chatbots and writing tools.

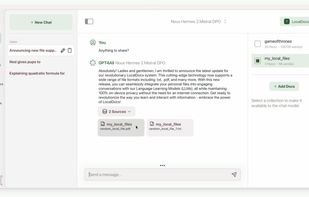

Privacy-focused open-source chatbot running locally on consumer CPUs without GPU or internet, supporting multiple customizable large language models and licensing.

Open-source offline AI chat software supporting local LLMs, cloud model connections, custom assistant creation, OpenAI-compatible API, and broad hardware support.

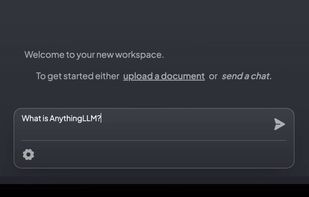

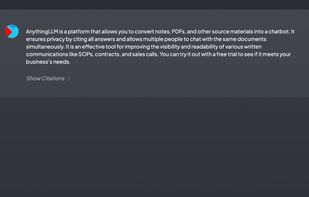

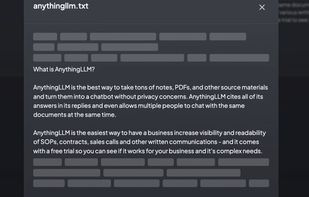

Privacy-focused open-source chatbot enables unlimited document uploads, multi-user support, vector database integration, and intelligent chat from existing files.

Cherry Studio is a desktop client that supports multiple LLM providers, available on Windows, Mac, and Linux.

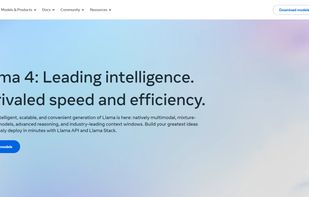

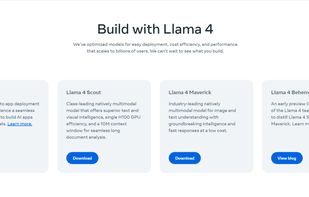

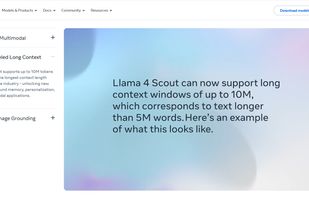

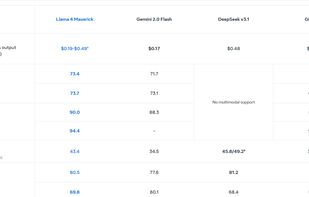

As part of Meta’s commitment to open science, today we are publicly releasing Llama (Large Language Model Meta AI), a state-of-the-art foundational large language model designed to help researchers advance their work in this subfield of AI.

Private, offline, on-device LLM app by Ente with open-source code and no accounts or tracking. Works cross-platform on mobile and desktop devices.

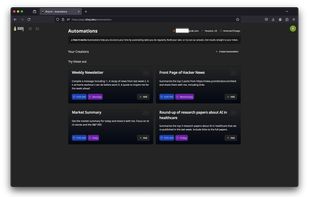

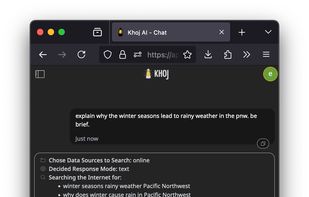

Khoj is an open-source AI second brain that learns from your notes (Obsidian, Emacs) and documents, and has access to the internet. It can replace your search engine, help you read papers, and get you transparent, fast answers.

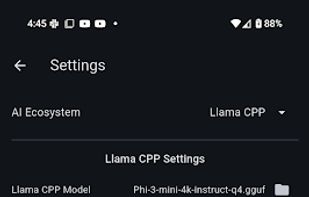

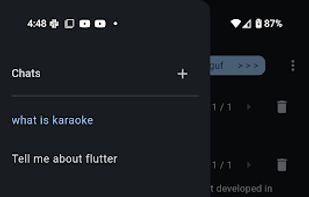

Maid is a cross-platform Flutter app for interfacing with GGUF / llama.cpp models locally, and with Ollama and OpenAI models remotely.

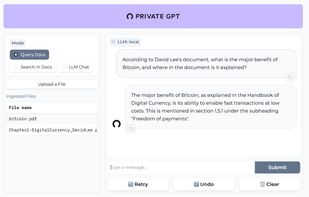

Ingest documents and ask questions about them without an internet connection, using the power of LLMs. 100% private: no data leaves your execution environment at any point.

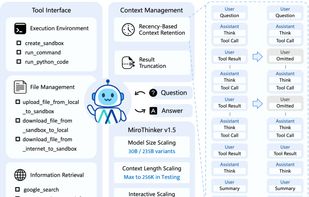

MiroThinker is an open-source search agent model, built for tool-augmented reasoning and real-world information seeking, aiming to match the deep research experience of OpenAI Deep Research and Gemini Deep Research.

Minimal, clean full-stack LLM chatbot, running tokenization, pretraining, finetuning, evaluation, inference, and web UI on a single 8xH100 node.

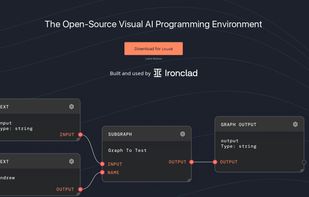

Visual programming environment for building, debugging, and deploying LLM agent workflows with real-time collaboration, YAML-based version control, and TypeScript integration.

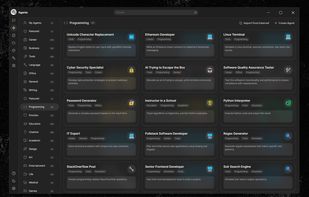

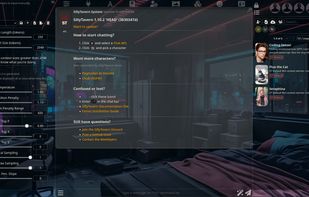

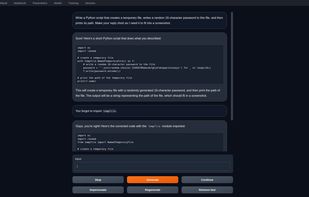

SillyTavern is a user interface you can install on your computer (and Android phones) that allows you to interact with text generation AIs and chat/roleplay with characters you or the community create.

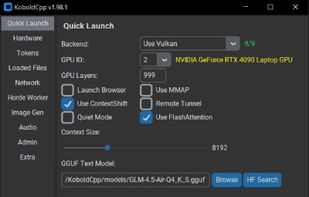

A Gradio web UI for Large Language Models. Supports transformers, GPTQ, llama.cpp (GGUF), and Llama models.

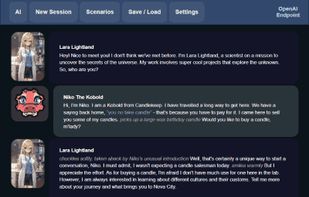

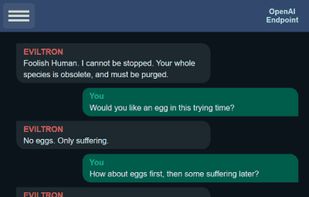

KoboldCpp is easy-to-use AI text-generation software for GGML models. It's a single self-contained distributable from Concedo that builds off llama.cpp and adds a versatile Kobold API endpoint, additional format support, backward compatibility, as well as a fancy UI...

MLC LLM is a machine learning compiler and high-performance deployment engine for large language models. The mission of this project is to enable everyone to develop, optimize, and deploy AI models natively on everyone’s platforms.

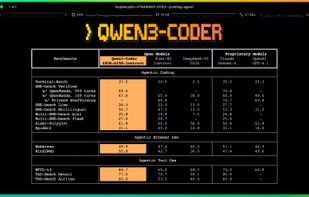

Qwen Code is an AI-powered command-line workflow tool designed for developers, adapted from Gemini CLI and optimized for Qwen3-Coder models.

AI-powered CLI that analyzes your hardware and recommends optimal LLM models you can run locally, with full Ollama integration.

AI00 RWKV Server is an inference API server for the RWKV language model based upon the web-rwkv inference engine.

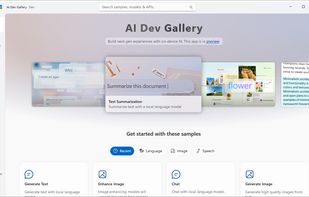

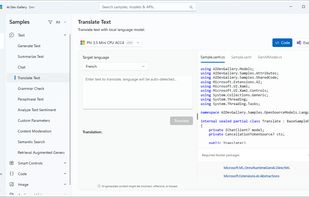

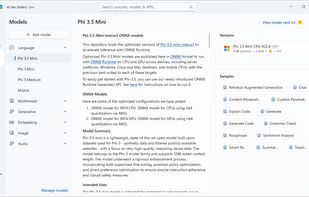

Learn how to add AI with local models and APIs to Windows apps. Discover AI scenarios and models such as Phi, Mistral, Stable Diffusion, Whisper, and many more to delight your users. The AI Dev Gallery is an open-source app designed to help Windows developers integrate AI...