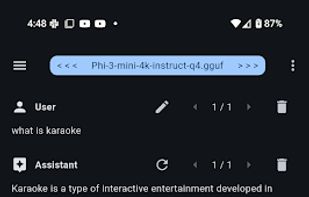

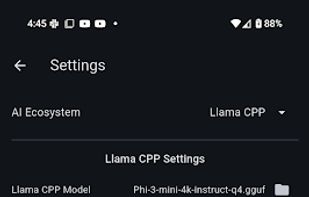

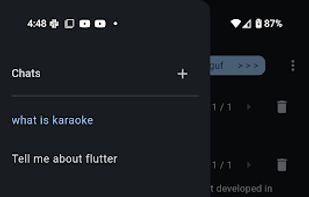

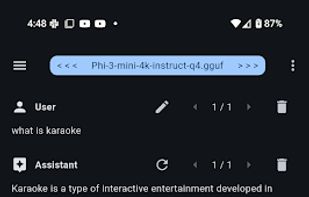

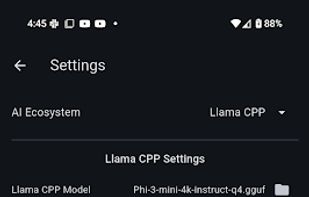

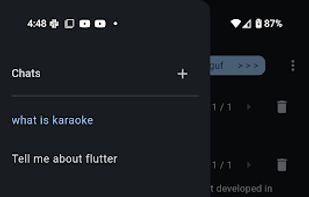

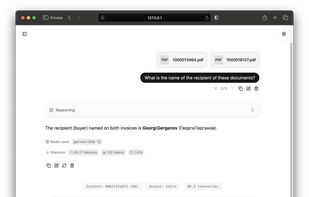

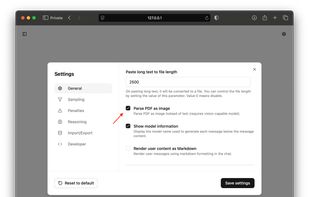

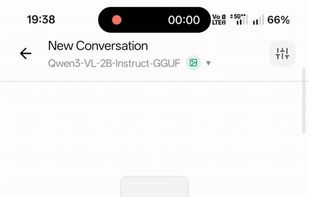

Maid is a cross-platform Flutter app for interfacing with GGUF / llama.cpp models locally, and with Ollama and OpenAI models remotely.

Cost / License

- Free

- Open Source (MIT)

Platforms

- Mac

- Windows

- Linux

- Android

- Android Tablet

- F-Droid

The main goal of llama.cpp is to enable LLM inference with minimal setup and state-of-the-art performance on a wide range of hardware - locally and in the cloud.

The Swiss Army Knife of offline AI. Chat, speak, and generate images. Privacy first, zero internet. Download an LLM and use it on your mobile device. No data ever leaves your phone.

With Neural Pixel, you can use Stable Diffusion on practically any GPU that supports Vulkan and has at least 3GB of VRAM. This is a simple way to generate your images without having to deal with CUDA/ROCm installations or hundreds of Python dependencies.

LLM Hub is an open-source Android app for on-device LLM chat and image generation. It's optimized for mobile usage (CPU/GPU/NPU acceleration) and supports multiple model formats so you can run powerful models locally and privately.

Cortex is the open-source brain for robots: vision, speech, language, tabular, and action. The cloud is optional.

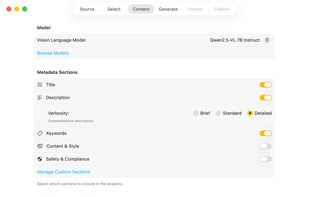

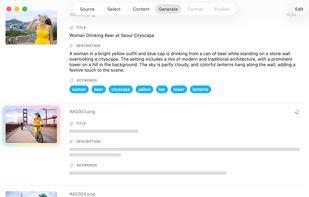

VisionTagger is a privacy-first macOS app that generates structured photo metadata (titles, captions, keywords) using local vision models, without uploading images or metadata to the cloud.

Run AI models locally on your machine with Node.js bindings for llama.cpp. Enforce a JSON schema on the model output at the generation level.