Whisper

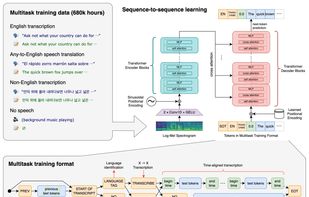

An open-source, end-to-end speech recognition system trained on 680,000 hours of diverse audio. Built on a Transformer architecture, it provides multilingual transcription, translation into English, language identification, and phrase-level timestamps, and it performs well in real-world conditions.

Features

- Speech to text

- Speech Recognition

- Ad-free

- Speech Transcription

- AI-Powered

Whisper News & Activities

Recent News

- Maoholguin published news article about Whisper

OpenAI unveils advanced Speech-to-Text and Text-to-Speech models in API update

OpenAI has introduced new speech-to-text and text-to-speech models in its API, enhancing voice agen...

- Maoholguin published news article about Spotify

Spotify and OpenAI launch AI-Powered Voice Translation for Podcasters

Spotify, in collaboration with OpenAI, has launched an AI-powered voice translation feature for pod...

- POX published news article about ChatGPT

Open AI announces general availability of GPT-4, GPT-3.5 Turbo and DALL-E APIs

OpenAI announced yesterday in a blog post that all paying API customers now have access to GPT-4. T...

Recent activities

- shsunmoon added Whisper as alternative to Transcribe.so

- POX added Whisper as alternative to Google AI Edge Eloquent and Ghost Pepper

- POX added Whisper as alternative to Silkwave Voice

- POX added Whisper as alternative to TypeWhisper

- srjson added Whisper as alternative to Aurora Subtitles

- Toolab added Whisper as alternative to Glasscribe

What is Whisper?

Whisper is a general-purpose speech recognition model. It is trained on a large, diverse audio dataset, and a single model handles multilingual speech recognition, speech translation, and language identification.

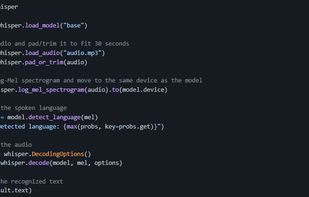

Whisper is an automatic speech recognition (ASR) system trained on 680,000 hours of multitask, multilingual data collected from the internet. The size and variety of this dataset make it more robust to accents, background noise, and technical language. It supports transcription in multiple languages as well as translation from those languages into English. Its models and inference code are open-source, which supports application development and further research.

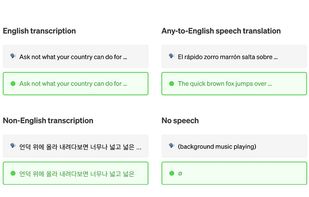

Whisper uses an end-to-end approach built on an encoder-decoder Transformer. Input audio is split into 30-second segments, each segment is converted into a log-Mel spectrogram, and the spectrogram is fed into the encoder. The decoder predicts the corresponding text caption together with special tokens that direct the model to perform tasks such as language identification and translation into English. About a third of Whisper’s audio dataset is non-English, and during training the model is alternately given the task of transcribing in the original language or translating into English. This approach has outperformed the supervised state of the art on CoVoST2 to-English translation in a zero-shot setting.
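The two ideas in the paragraph above, fixed 30-second input windows and special tokens that select the task, can be illustrated with a minimal standard-library sketch. It is not Whisper's actual code. The 16 kHz sample rate, the 160-sample hop, and the token spellings are assumptions taken from the publicly released reference implementation rather than from this page, and the helper names `split_into_windows` and `decoder_prompt` are hypothetical. The reference implementation also advances its window based on predicted timestamps, whereas this sketch uses simple fixed windows.

```python
from typing import List

# Assumed constants (from the reference implementation, not stated on this page).
SAMPLE_RATE = 16_000                      # audio samples per second
CHUNK_SECONDS = 30                        # segment length described above
HOP_LENGTH = 160                          # samples between spectrogram frames (10 ms)
N_SAMPLES = SAMPLE_RATE * CHUNK_SECONDS   # samples in one 30-second window
N_FRAMES = N_SAMPLES // HOP_LENGTH        # spectrogram frames per window


def split_into_windows(samples: List[float]) -> List[List[float]]:
    """Cut mono audio into 30-second windows, zero-padding the last one."""
    windows = []
    # max(..., 1) ensures empty input still yields one window of silence.
    for start in range(0, max(len(samples), 1), N_SAMPLES):
        window = samples[start:start + N_SAMPLES]
        window = window + [0.0] * (N_SAMPLES - len(window))
        windows.append(window)
    return windows


def decoder_prompt(language: str, task: str = "transcribe",
                   timestamps: bool = True) -> List[str]:
    """Build the special-token prefix that tells the decoder what to do."""
    if task not in ("transcribe", "translate"):
        raise ValueError("task must be 'transcribe' or 'translate'")
    prompt = ["<|startoftranscript|>", f"<|{language}|>", f"<|{task}|>"]
    if not timestamps:
        prompt.append("<|notimestamps|>")
    return prompt


if __name__ == "__main__":
    audio = [0.1] * (45 * SAMPLE_RATE)    # 45 seconds of placeholder audio
    windows = split_into_windows(audio)
    print(len(windows), "windows of", N_FRAMES, "frames each")
    print(decoder_prompt("fr", task="translate"))
```

Under these assumptions, a 45-second clip becomes two windows, the second half-filled with silence, and the same model is switched between transcribing French and translating it into English by changing only the task token in the prompt.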

Comments and Reviews

Works well and is not very hard to use if you are a developer, but the good models need a good GPU.

I don't speak English and this software helps me get any movie or video subtitles in my native language. Amazing!