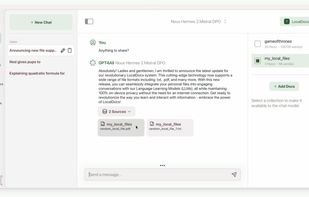

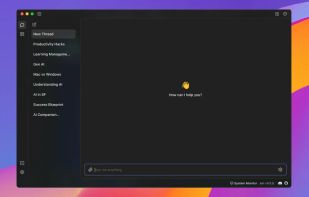

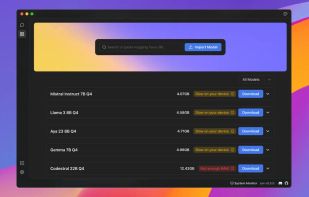

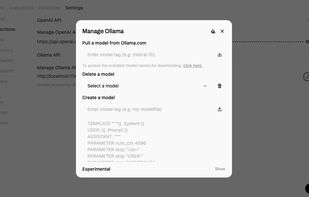

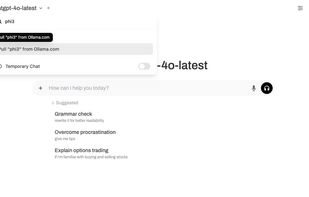

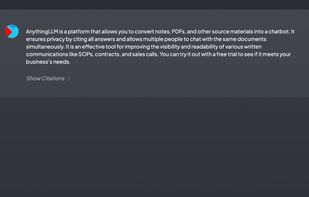

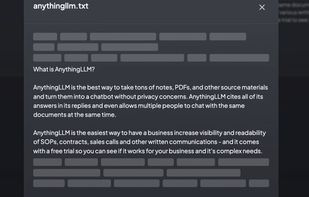

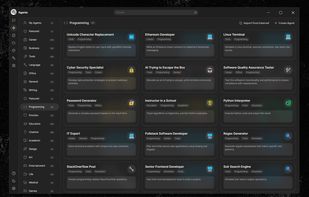

vMLX is described as 'Provides functions no other MLX inferencing app does, including LM Studio, from KV Cache Quantization (save 2-4x the RAM), Prefix Caching, and full VL support' and is a large language model (LLM) tool in the AI tools & services category. There are more than 10 alternatives to vMLX across a variety of platforms, including Linux, Windows, Mac, Flathub and Flatpak. The best vMLX alternative is Ollama, which is both free and open source. Other strong alternatives to vMLX are GPT4All, Jan.ai, Open WebUI and AnythingLLM.