stenoAI


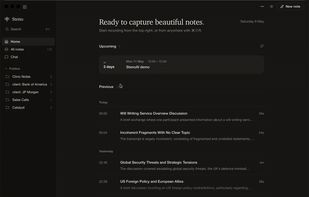

Steno is the AI-powered intelligence layer for all your confidential conversations. Capture beautiful notes whilst keeping your data confidential. Perfect for government, defence, legal and CXOs.

Cost / License

- Free

- Open Source (MIT)

Platforms

- Mac

Features

Properties

- Privacy-focused

Features

- Ad-free

- Support for Markdown

- No Tracking

- Works Offline

- No registration required

- Dark Mode

- AI-Powered

- Meeting notes

- AI Summarization

- Audio Recording

- Built-in Note Taker

- Agentic AI

stenoAI News & Activities


Recent activities

- POX added stenoAI as an alternative to BB Recorder, Granola, Offscript and Meeting Mind

- POX updated stenoAI

- POX added stenoAI

stenoAI information


What is stenoAI?

Steno is the AI-powered intelligence layer for all your confidential conversations; your private data never leaves your Mac. Record, transcribe, summarize, and query your meetings using local AI models. Perfect for government, defence, legal and C-suite professionals with confidential data needs.

Features:

- Privacy-first — 100% on-device; your recordings, transcripts, and summaries never leave your Mac

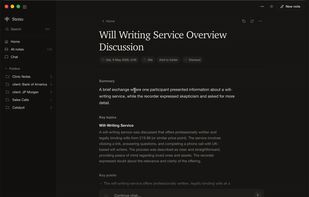

- In-app note-taking — Jot notes while you record; they're folded straight into the AI summary

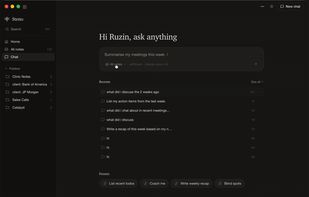

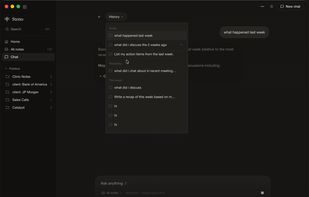

- Ask your meetings — Natural-language Q&A across a single note or your entire library via the Chat tab (summary, key topics, full transcript)

- System audio capture — Record both sides of virtual meetings, headphones on, no extra setup. Native Core Audio Tap on macOS 14.4+ with automatic fallback on older versions

- Speaker diarisation — [You] vs [Others] labels on system-audio recordings

- Multi-language — Auto-detect and transcribe in 99 languages

- Markdown notes — Summaries and transcripts saved as clean Markdown you can edit, search, or sync

- Remote Ollama server — Offload summarisation to a beefier Mac or workstation on your network

- Bring your own cloud model — Optional OpenAI, Anthropic, or custom API endpoint for users who prefer a hosted LLM

- Under the hood — Local transcription via whisper.cpp, summarisation via bundled Ollama (5 models to choose from)
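To make the remote Ollama option more concrete, here is a minimal sketch of what a summarisation request to an Ollama server on the local network could look like. It is not stenoAI's own code. It assumes Ollama's public `/api/generate` endpoint on its default port 11434; the host address, the model name `llama3.2`, the prompt wording, and the function names are all hypothetical choices made for illustration.

```python
import json
import urllib.request


def build_summary_request(
    transcript: str,
    host: str = "http://localhost:11434",  # assumed: Ollama's default address
    model: str = "llama3.2",               # hypothetical model name
) -> urllib.request.Request:
    """Build a POST request asking an Ollama server to summarise a transcript."""
    payload = {
        "model": model,
        # Hypothetical prompt; the app's real prompt is not documented here.
        "prompt": "Summarize this meeting transcript as Markdown bullet points:\n\n"
        + transcript,
        "stream": False,  # ask for one JSON object instead of a token stream
    }
    return urllib.request.Request(
        url=host.rstrip("/") + "/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def summarize(transcript: str, host: str, model: str) -> str:
    """Send the request and return the generated text (needs a reachable server)."""
    request = build_summary_request(transcript, host, model)
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read())["response"]
```

Pointing `host` at another machine, for example `summarize(text, "http://192.168.1.20:11434", "llama3.2")`, would send the transcript text to that machine for summarisation while the audio and transcription stay on the Mac. The address shown is a placeholder.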