SmolChat

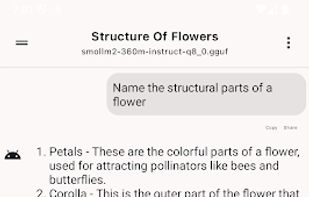

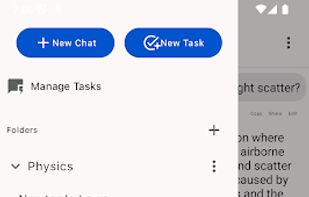

SmolChat allows you to download and run popular LLMs on your Android device, locally, without needing an internet connection. Customize the model used for each chat, tune settings like temperature and min-p, and pin your favourite chats on the home-screen with shortcuts.

Features

Properties

- Privacy focused

- Distraction-free

Features

- No registration required

- No Tracking


SmolChat information


Project Goals

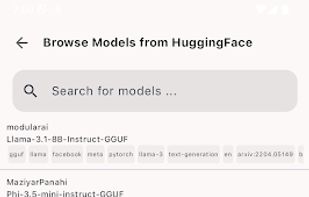

- Provide a usable interface for interacting with SLMs (small language models) locally, on-device

- Allow users to add/remove SLMs (GGUF models) and modify their system prompts or inference parameters (temperature, min-p)

- Allow users to create specific downstream tasks quickly and use SLMs to generate responses

- Keep the codebase simple, easy to understand, and extensible
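To make the two inference parameters named above concrete, here is a minimal sketch of how temperature and min-p can narrow the set of candidate tokens. The function name `minPCandidates` is hypothetical, and applying temperature before min-p is an assumption; the sampler chain used by the app through llama.cpp may order or implement these steps differently.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical helper, not part of SmolChat or llama.cpp.
// Returns the indices of tokens that survive min-p filtering.
//   logits:      raw model scores, one per vocabulary token
//   temperature: must be > 0; lower values sharpen the distribution
//   minP:        in [0, 1]; a token is kept when
//                p(token) >= minP * p(most likely token)
std::vector<std::size_t> minPCandidates(const std::vector<float>& logits,
                                        float temperature, float minP) {
    std::vector<std::size_t> kept;
    if (logits.empty() || temperature <= 0.0f) return kept;

    // Temperature-scaled softmax, shifted by the max logit for stability.
    const float maxLogit = *std::max_element(logits.begin(), logits.end());
    std::vector<float> probs(logits.size());
    float sum = 0.0f;
    for (std::size_t i = 0; i < logits.size(); ++i) {
        probs[i] = std::exp((logits[i] - maxLogit) / temperature);
        sum += probs[i];
    }
    for (float& p : probs) p /= sum;

    // Keep every token whose probability reaches the min-p threshold.
    const float threshold = minP * *std::max_element(probs.begin(), probs.end());
    for (std::size_t i = 0; i < probs.size(); ++i) {
        if (probs[i] >= threshold) kept.push_back(i);
    }
    return kept;
}
```

With this sketch, raising the temperature flattens the distribution, so more tokens clear the same min-p threshold; raising min-p has the opposite effect and makes the output more conservative.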

Setup

Clone the repository together with its llama.cpp submodule:

    git clone --depth=1 https://github.com/shubham0204/SmolChat-Android
    cd SmolChat-Android
    git submodule update --init --recursive

Open the cloned project in Android Studio, which starts building it automatically. If the build does not start, select Build > Rebuild Project.

After a successful project build, connect an Android device to your system. Once connected, the name of the device should appear in the top menu bar of Android Studio.

Working

The application uses llama.cpp to load and execute GGUF models. As llama.cpp is written in pure C/C++, it is easy to compile on Android-based targets using the NDK.

The smollm module contains llm_inference.cpp, a class that uses llama.cpp's C-style API to execute the GGUF model, and smollm.cpp, which provides the JNI bindings. See the C++ source files in the repository for details. On the Kotlin side, the SmolLM class provides the methods needed to call into the JNI (C++ side) bindings.
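A common way to connect a Kotlin class to a C++ object through JNI is to pass the native pointer across the boundary as a 64-bit integer (`jlong` on the JNI side). The sketch below illustrates that pattern without depending on `jni.h`: `NativeModel`, `Handle`, and the three free functions are hypothetical names, and `std::int64_t` stands in for `jlong`. Whether smollm.cpp follows exactly this pattern is an assumption.

```cpp
#include <cstdint>
#include <string>
#include <utility>

// Hypothetical stand-in for the native inference class.
class NativeModel {
public:
    explicit NativeModel(std::string path) : path_(std::move(path)) {}
    const std::string& path() const { return path_; }
private:
    std::string path_;
};

// Same width as JNI's jlong; the managed side stores this opaque value.
using Handle = std::int64_t;

// Would be called from a JNI "create" function: allocate and return a handle.
Handle createModel(const std::string& path) {
    return reinterpret_cast<Handle>(new NativeModel(path));
}

// Later calls pass the handle back, and it is cast to the native object.
const std::string& modelPath(Handle handle) {
    return reinterpret_cast<NativeModel*>(handle)->path();
}

// Must be called exactly once per handle to avoid leaking the object.
void destroyModel(Handle handle) {
    delete reinterpret_cast<NativeModel*>(handle);
}
```

In this design the managed side owns the handle's lifetime, so the wrapper class needs an explicit close or release method that triggers the destroy call.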

The app module contains the application logic and UI code. Whenever a new chat is opened, the app instantiates the SmolLM class and passes it the model file path stored in the LLMModel entity. Next, the app retrieves the chat's messages with roles user and system from the database and adds them to the chat using LLMInference::addChatMessage.

For tasks, the messages are not persisted; the app signals this to LLMInference by passing _storeChats=false to LLMInference::loadModel.
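The difference between a chat and a task can be sketched as a message list with a flag that decides whether finished exchanges are retained. `ChatSession`, `ChatMessage`, `buildContext`, and `recordExchange` are hypothetical names for illustration, and the argument order of `addChatMessage` is assumed; the actual LLMInference class may handle this differently.

```cpp
#include <string>
#include <utility>
#include <vector>

struct ChatMessage {
    std::string role;     // "system", "user" or "assistant"
    std::string content;
};

// Hypothetical sketch of a session that mirrors the storeChats flag.
class ChatSession {
public:
    explicit ChatSession(bool storeChats) : storeChats_(storeChats) {}

    // Adds a message unconditionally, e.g. when restoring history.
    void addChatMessage(std::string content, std::string role) {
        messages_.push_back({std::move(role), std::move(content)});
    }

    // Messages the model would see when answering `query`.
    std::vector<ChatMessage> buildContext(const std::string& query) const {
        std::vector<ChatMessage> context = messages_;
        context.push_back({"user", query});
        return context;
    }

    // Retains a finished exchange only when storeChats is true, so a
    // task (storeChats = false) answers every query from a clean slate.
    void recordExchange(const std::string& query, const std::string& response) {
        if (!storeChats_) return;
        addChatMessage(query, "user");
        addChatMessage(response, "assistant");
    }

    const std::vector<ChatMessage>& messages() const { return messages_; }

private:
    bool storeChats_;
    std::vector<ChatMessage> messages_;
};
```

With `storeChats` set to false, only the messages added up front (such as a system prompt) are reused across queries, which keeps the context short for one-shot tasks.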

Technologies

ggerganov/llama.cpp is a pure C/C++ framework to execute machine learning models on multiple execution backends. It provides a primitive C-style API to interact with LLMs converted to the GGUF format native to ggml/llama.cpp. The app uses JNI bindings to interact with a small class, smollm.cpp, which uses llama.cpp to load and execute GGUF models.

noties/Markwon is a Markdown rendering library for Android. The app uses Markwon and Prism4j (for code syntax highlighting) to render Markdown responses from the SLMs.