Work at light speed. Get AI-powered completions instantly anywhere on your Mac. From emails to documents, forms to CRM — everything just works faster.

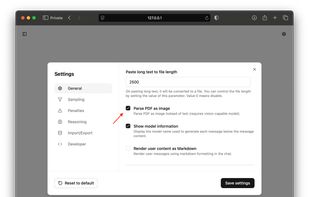

Operit AI is described as '📱 The first fully functional, standalone AI assistant for mobile devices with powerful tool-calling capabilities 📱' and is an app in the AI Tools & Services category. There are more than 50 alternatives to Operit AI for a variety of platforms, including web-based, Android, Windows, Mac and iPhone apps. The best Operit AI alternative is ChatGPT, which is free. Other great apps like Operit AI are Lumo by Proton, DeepSeek, Mistral Le Chat and Ollama.

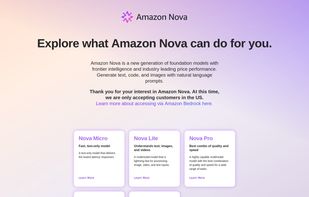

Amazon Nova is a new generation of foundation models with frontier intelligence and industry-leading price performance. Generate text, code, and images with natural language prompts.

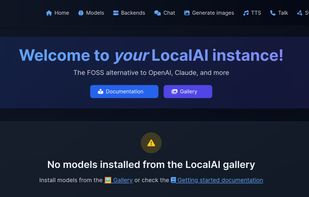

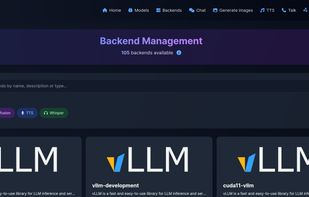

Drop-in OpenAI replacement: on-device and local-first. Generate text, images, speech, music, and more. Backend-agnostic (llama.cpp, diffusers, bark.cpp, etc.), with optional distributed inference (P2P/federated).
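
As a rough illustration of what "drop-in OpenAI replacement" usually means in practice, the sketch below builds an OpenAI-style chat completion request aimed at a local server instead of the hosted API. The base URL, port, and model name are hypothetical placeholders, not values taken from this listing; the only thing assumed about the server is that it accepts the standard `/v1/chat/completions` request shape.

```python
import json
import urllib.request

# Hypothetical values for illustration only: adjust to whatever the
# local server actually listens on and whichever model it has loaded.
BASE_URL = "http://localhost:8080/v1"
MODEL = "my-local-model"


def build_chat_request(prompt, base_url=BASE_URL, model=MODEL):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_chat_request("Say hello in one sentence.")
print(request.full_url)

# Sending the request needs a server listening at BASE_URL:
# with urllib.request.urlopen(request) as response:
#     reply = json.load(response)
#     print(reply["choices"][0]["message"]["content"])
```

Because only the base URL changes, existing client code written against the OpenAI request format can, in principle, be pointed at such a server without other modifications.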

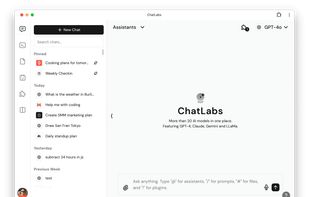

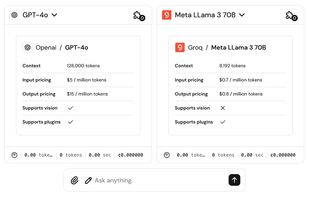

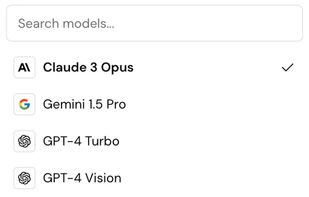

ChatLabs is a comprehensive AI platform that provides access to over 30 leading AI models in one place, including GPT-4, Claude, Gemini, Llama 3, DALL-E 3, and Stable Diffusion.

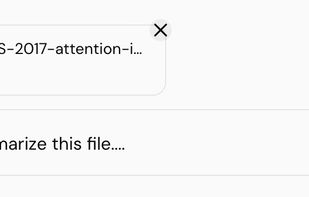

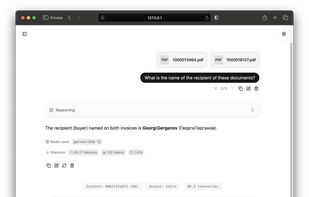

PandaChat.ai serves as your research assistant, helping you simplify complex topics, summarise content and get answers immediately. Whether you're reading a lengthy article, blog post, Wikipedia page or research paper, Byte 🤖 will respond to your query about any file...

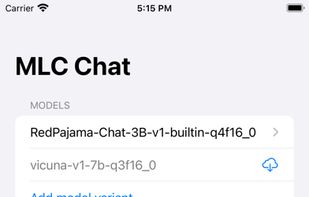

MLC LLM is a machine learning compiler and high-performance deployment engine for large language models. The mission of this project is to enable everyone to develop, optimize, and deploy AI models natively on everyone’s platforms.

The Swiss Army Knife of offline AI. Chat, speak, and generate images. Privacy first, zero internet. Download an LLM and use it on your mobile device. No data ever leaves your phone.

The main goal of llama.cpp is to enable LLM inference with minimal setup and state-of-the-art performance on a wide range of hardware - locally and in the cloud.

Run LLMs on AMD Ryzen™ AI NPUs in minutes. Just like Ollama - but purpose-built and deeply optimized for the AMD NPUs.

Experience the power of RWKV models directly on your device. Completely offline, privacy-first, and efficient. No internet required.

AI00 RWKV Server is an inference API server for the RWKV language model based upon the web-rwkv inference engine.