Cost / License

- Free

- Open Source (AGPL-3.0)

Platforms

- Self-Hosted

Ollama is described as 'Facilitates local deployment of Llama 3, Code Llama, and other language models, enabling customization and offline AI development. Perfect for creating personalized AI chatbots and writing tools' and is a very popular large language model (LLM) tool in the AI tools & services category. There are more than 50 alternatives to Ollama for a variety of platforms, including Windows, Mac, Linux, Android, and web-based apps. The best Ollama alternative is DeepSeek, which is both free and Open Source. Other great apps like Ollama are Jan.ai, AnythingLLM, Alpaca - Ollama Client, and Ensu.

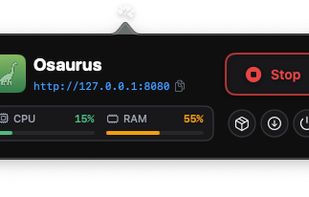

AI edge infrastructure for macOS. Run local or cloud models, share tools across apps via MCP, and power AI workflows with a native, always-on runtime.

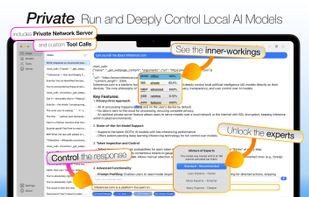

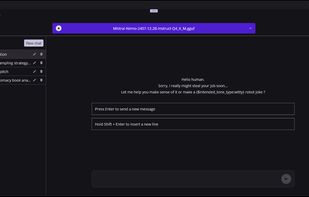

Inferencer lets you run, host and deeply control the latest SOTA AI models (OSS, DeepSeek, Qwen, Kimi, GLM, MiniMax and more) from your own computer.

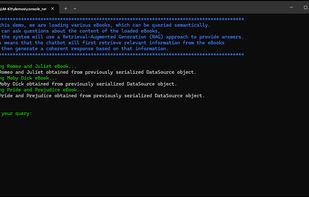

LM-Kit.NET is a versatile SDK for integrating Large Language Models (LLMs) into C# applications. It provides advanced AI features like text generation, Natural Language Processing (NLP), data retrieval, content enhancement, and translation, enabling diverse industry use cases with ease.

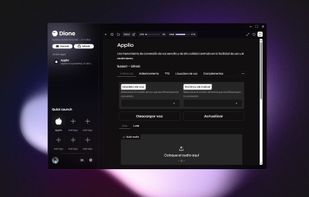

Dione makes installing complex applications as simple as clicking a button — no terminal or technical knowledge needed. For developers, Dione offers a zero-friction way to distribute apps using just a JSON file. App installation has never been this effortless.

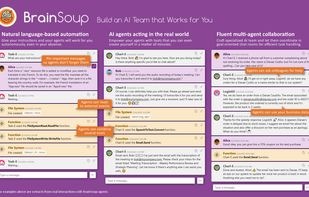

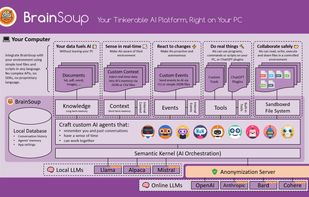

BrainSoup is an ingenious, modular AI platform that empowers you to build autonomous assistants for any task, all while ensuring your data remains private on your local device. Explore BrainSoup's endless possibilities and join the community.

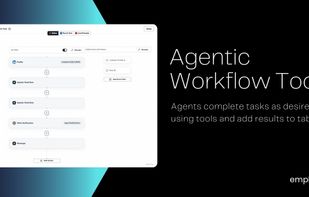

Automate unresolved go-to-market challenges and empower your CRM, marketing, and sales teams to achieve desired results with a multi-agent framework, B2B database creation, agentic workflows, and integrations.

Shinkai is a two-click install app that lets you create local AI agents in 5 minutes or less using a simple UI. It supports MCPs, remote and local AI, and crypto and payments.

Run frontier LLMs and VLMs with day-0 model support across GPU, NPU, and CPU, with comprehensive runtime coverage for PC (Python/C++), mobile (Android & iOS), and Linux/IoT (Arm64 & x86 Docker). Supporting OpenAI GPT-OSS, IBM Granite-4, Qwen-3-VL, Gemma-3n, Ministral-3, and more.

Council is an open-source platform for rapidly developing customized generative AI applications using collaborating ‘agents’.

Create autonomous agents: Each agent has a unique personality and can accumulate its own memories.