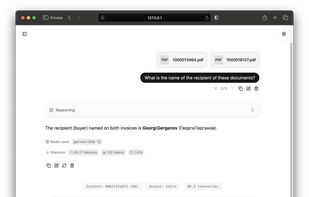

LLM Hub is an open-source Android app for on-device LLM chat and image generation. It is optimized for mobile hardware, with CPU, GPU, and NPU acceleration, and it supports multiple model formats, so you can run models locally and privately.

Cost / License

- Free

- Open Source

Platforms

- Android

- Android Tablet