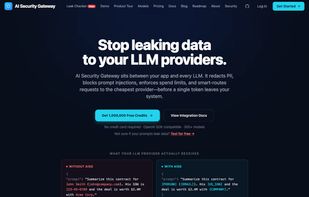

AI Security Gateway

Open-source AI firewall and LLM proxy that redacts PII, blocks prompt injection, and enforces spend budgets before requests reach any AI provider. Apache 2.0, self-hostable.

Cost / License

- Freemium (Pay once or Subscription)

- Open Source (Apache-2.0)

Platforms

- Online

- Self-Hosted

- Software as a Service (SaaS)

- Docker

Tags

- prompt-injection-defense

- self-hosted-ai

- llm-security

- llm-proxy

- pii-redaction

- prompt-injection-llm-security

- ai-governance

- ai-security

- openai-compatible-api

- openai-compatible-proxy-server

- data-loss-prevention

- self-hosted-apps

- prompt-injection-sanitization

- ai-proxy

- pii-detection

- prompt-injection-detection

- ai-proxy-service

- openai-compatible

- prompt-injection

- dlp

- pii-anonymization

- prompt-injection-prevention

AI Security Gateway News & Activities

Recent activities

- binugeorgep updated AI Security Gateway

- binugeorgep added Python SDK as a feature to AI Security Gateway

- binugeorgep liked AI Security Gateway

- luckyPipewrench added AI Security Gateway as alternative to Pipelock

- Maoholguin updated AI Security Gateway

- binugeorgep added AI Security Gateway as alternative to OpenRouter

- binugeorgep added AI Security Gateway

- binugeorgep added AI Security Gateway as alternative to Helicone

AI Security Gateway information

What is AI Security Gateway?

AI Security Gateway (AISG) is a vendor-neutral governance layer that sits between your application and any LLM provider (OpenAI, Anthropic, Google, Groq, Mistral, xAI, Together.ai, DeepInfra). It scans every request for sensitive data, covering 28+ entity types such as emails, SSNs, credit card numbers, and API keys, and redacts or blocks matches before anything reaches the model.
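The scan-and-redact step can be pictured with a small sketch. This is not AISG's detection logic, which the listing does not describe; the regular expressions, the `PATTERNS` table, and the `redact` function are all assumptions made for illustration, and production detectors would need to be far more robust.

```python
import re

# Illustrative patterns only. The real detectors used by AISG are not
# described in the listing; these three are simplified assumptions.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "CREDIT_CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def redact(text):
    """Replace each match with a placeholder and count matches per entity type."""
    counts = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


redacted, entity_counts = redact(
    "Contact jane@example.com, SSN 123-45-6789, card 4111 1111 1111 1111."
)
print(redacted)
print(entity_counts)
```

Returning the counts separately from the text mirrors the idea that only metadata, not the prompt itself, needs to be kept.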

AISG is fully stateless: prompts pass through and are never stored, logged, or used for training. Only metadata (cost, latency, entity counts) is recorded. Smart cost routing automatically picks the cheapest provider for each request, hard budget enforcement prevents runaway agent costs, and prompt injection and jailbreak blocking are included.

It is drop-in compatible with the OpenAI SDK: change your base URL (a two-line change) to get security and cost control with no other code changes.
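To make the base URL swap concrete, here is a minimal sketch that builds (without sending) the same OpenAI-style chat completion request twice, once for the provider directly and once for a gateway. The gateway address `http://localhost:8080/v1`, the placeholder keys, and the helper `build_chat_request` are assumptions for illustration; the actual endpoint comes from your own deployment or account.

```python
import json
import urllib.request

# Hypothetical gateway address; substitute the URL of your own deployment.
GATEWAY_BASE_URL = "http://localhost:8080/v1"
OPENAI_BASE_URL = "https://api.openai.com/v1"


def build_chat_request(base_url, api_key, model, user_message):
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Only the base URL and the key differ; the request body is identical.
direct = build_chat_request(OPENAI_BASE_URL, "sk-placeholder", "gpt-4o-mini", "Hello")
via_gateway = build_chat_request(GATEWAY_BASE_URL, "gateway-key-placeholder", "gpt-4o-mini", "Hello")
print(direct.full_url)
print(via_gateway.full_url)
```

With the official OpenAI Python SDK, the equivalent change is passing `base_url` and `api_key` to the `OpenAI(...)` client constructor.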

AISG is available as a managed cloud service (1M free credits, no credit card required) or fully self-hosted via Docker under Apache 2.0. It sends zero telemetry and is fail-closed by default: if the security layer is unreachable, requests are blocked, never forwarded unscanned.
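Fail-closed behavior can be sketched in a few lines. The names below (`ScannerUnavailable`, `RequestBlocked`, `forward_if_scanned`) are hypothetical and only illustrate the policy stated above: a request is forwarded only after a successful scan, and a scanner outage results in a block rather than a pass-through.

```python
class ScannerUnavailable(Exception):
    """Raised when the (hypothetical) security scanner cannot be reached."""


class RequestBlocked(Exception):
    """Raised when a request must not be forwarded to the provider."""


def forward_if_scanned(prompt, scan, forward):
    """Forward the prompt only after a successful scan; otherwise block."""
    try:
        safe_prompt = scan(prompt)
    except ScannerUnavailable as exc:
        # Fail closed: no scan result means nothing is forwarded.
        raise RequestBlocked("security layer unreachable") from exc
    return forward(safe_prompt)
```

A fail-open design would instead catch the outage and call `forward(prompt)` anyway, which is exactly the unscanned path the description rules out.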

Key capabilities:

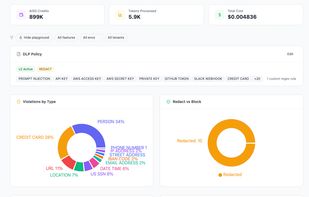

- PII redaction across text and images (OCR-based)

- Prompt injection and jailbreak blocking

- Recursive loop protection — kills agent retry loops at the gateway layer

- HMAC-signed webhook alerts — real-time push to Slack, PagerDuty, or any SIEM

- EU AI Act Article 12 compliance logging — hash-chained tamper-evident audit trails
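Two of the items above, HMAC-signed webhooks and hash-chained audit trails, rest on standard primitives that can be sketched with Python's standard library. The payload layout, the choice of SHA-256, the field names, and every function name here are assumptions; the listing does not specify AISG's actual signature scheme or log format.

```python
import hashlib
import hmac
import json

GENESIS_HASH = "0" * 64  # assumed starting value for the chain


def sign_payload(secret, body):
    """Return a hex HMAC-SHA256 signature for a webhook body (bytes)."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def verify_signature(secret, body, signature):
    """Constant-time check that the signature matches the body."""
    return hmac.compare_digest(sign_payload(secret, body), signature)


def _entry_hash(prev_hash, metadata):
    # Each hash covers the previous hash, which links entries into a chain.
    payload = json.dumps({"prev": prev_hash, "meta": metadata}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log, metadata):
    """Append a metadata record whose hash depends on the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({
        "prev": prev_hash,
        "meta": metadata,
        "hash": _entry_hash(prev_hash, metadata),
    })


def verify_chain(log):
    """Return True only if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        expected = _entry_hash(prev_hash, entry["meta"])
        if entry["prev"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True
```

Because every hash depends on the one before it, editing an early record invalidates all later ones, which is what makes the trail tamper-evident.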

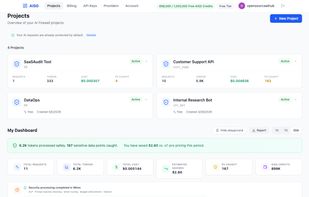

- Per-project spend quotas and token caps

- Smart routing across 380+ models — auto-picks cheapest provider

- Zero data retention — prompts processed in-memory, never stored

- Full self-hosted option via Docker/Kubernetes (Apache 2.0)

- BYOK — no markup on your provider API costs
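Per-project spend quotas and cheapest-provider routing can be combined in a short sketch. The prices, provider names, and the classes `ProviderQuote` and `ProjectBudget` are invented for illustration; the listing does not document how AISG prices requests or selects providers.

```python
from dataclasses import dataclass


@dataclass
class ProviderQuote:
    provider: str
    usd_per_1k_tokens: float  # made-up price, for illustration only


class BudgetExceeded(Exception):
    """Raised when a request would push a project past its hard limit."""


class ProjectBudget:
    def __init__(self, limit_usd):
        self.limit_usd = limit_usd
        self.spent_usd = 0.0

    def charge(self, cost_usd):
        # Hard enforcement: reject before spending, not after.
        if self.spent_usd + cost_usd > self.limit_usd:
            raise BudgetExceeded(
                f"would spend {self.spent_usd + cost_usd:.2f} of {self.limit_usd:.2f} USD"
            )
        self.spent_usd += cost_usd


def route_request(quotes, estimated_tokens, budget):
    """Pick the cheapest quote, charge the budget, return (provider, cost)."""
    cheapest = min(quotes, key=lambda q: q.usd_per_1k_tokens)
    cost = cheapest.usd_per_1k_tokens * estimated_tokens / 1000
    budget.charge(cost)
    return cheapest.provider, cost
```

Charging the budget before the request is routed is what stops a looping agent: once the limit is reached, every further call raises instead of reaching a provider.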

Built for developers shipping LLM apps, teams handling sensitive data in regulated industries (healthcare, finance, legal), and anyone who needs AI security without vendor lock-in.