Llama Guard is an LLM-based input-output safeguard model geared towards Human-AI conversation use cases.

WildGuard is an open, lightweight moderation tool for LLM safety that achieves three goals:

Cost / License

- Free

- Open Source

Application type

Platforms

- Self-Hosted

- Python



ShieldGemma is a set of instruction-tuned models for evaluating the safety of text and images against a set of defined safety policies. You can use this model as part of a larger implementation of a generative AI application to help evaluate and prevent generative AI...

Cost / License

- Free

- Proprietary

Application type

Platforms

- Self-Hosted

- Google Cloud Platform

A text classification model that can be used as a guardrail to protect against toxic prompts and responses in conversational AI systems.

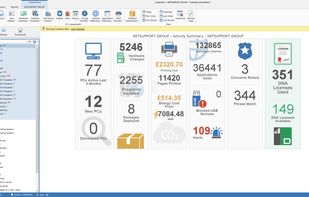

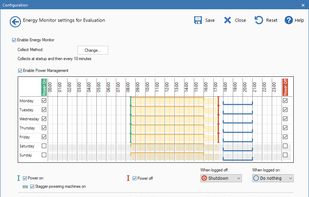

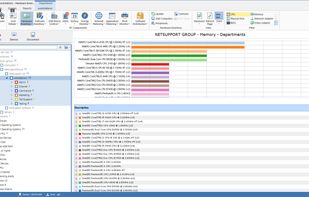

