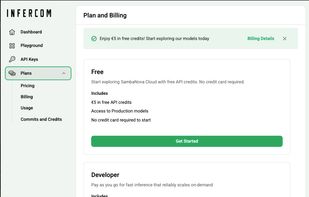

deAPI is an OpenAI-compatible inference API for open-source models covering image, video, audio, embeddings, and OCR. It runs on decentralized GPUs and offers $5 in free credit, no card required.

Cost / License

- Paid
- Proprietary

Platforms

- Online

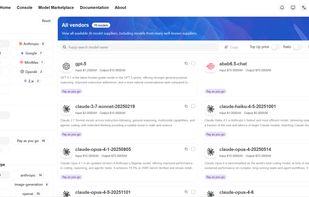

Together AI is described as 'Run and fine-tune generative AI models with easy-to-use APIs and highly scalable infrastructure. Train and deploy models at scale on our AI Acceleration Cloud and scalable GPU clusters. Optimize performance and cost' and is an app in the AI Tools & Services category. There are more than 25 alternatives to Together AI for a variety of platforms, including Web-based, Windows, Linux, Mac, and SaaS apps. The best Together AI alternative is deAPI. Together AI is not free, so if you're looking for a free alternative, you could try Unsloth or MiniMax Platform. Other great apps like Together AI are Mistral Forge, Fireworks AI, Plexe AI, and AIKit.

Transform institutional knowledge into frontier-grade LLMs—without infrastructure burden or cloud lock-in.

Harness state-of-the-art open-source LLMs and image models at blazing speeds with Fireworks AI. Utilize rapid deployment, fine-tuning without extra costs, FireAttention for model efficiency, and FireFunction for complex AI applications including automation and domain-expert copilots.

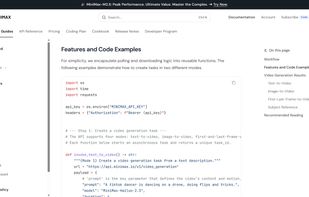

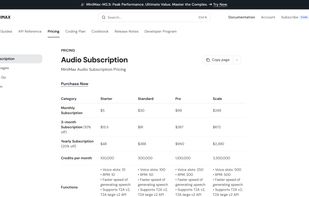

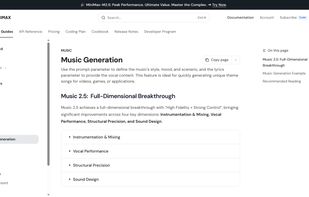

MiniMax Platform is a versatile AI ecosystem offering advanced models for text, speech, video, and music generation, optimized for coding, creative expression, and immersive interaction.

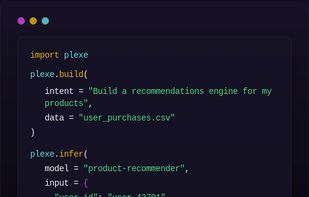

Plexe AI enables you to create, train, and deploy machine learning models using simple English commands — no coding required.

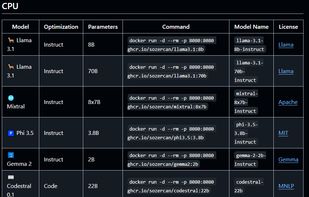

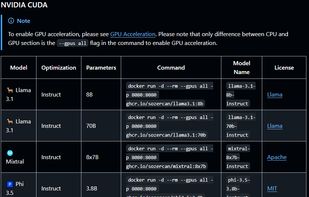

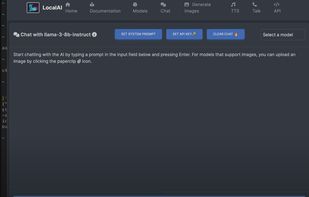

AIKit is a comprehensive platform for quickly getting started with hosting, deploying, building, and fine-tuning large language models (LLMs).

sllm provides access to a range of large language models at the lowest possible price, with flexible configuration, all securely and privately hosted on dedicated GPU infrastructure. No markup beyond cost. No tracking. Just the models you need.

An EU-sovereign enterprise AI inference platform with an OpenAI-compatible API for running open-source LLMs on dedicated infrastructure in Europe. Full GDPR compliance, no US CLOUD Act exposure.

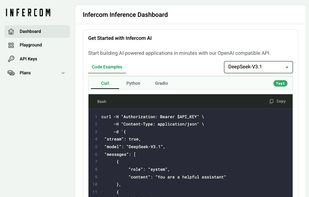

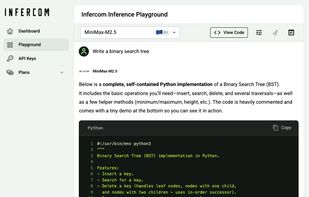

Abstract the complexity and focus on building great products. Fully compatible with the OpenAI SDK, so there is no new API to learn. From creative to production, AI capabilities at your fingertips.