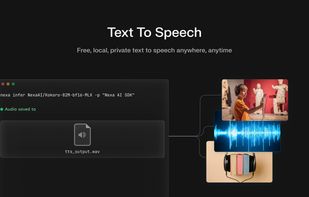

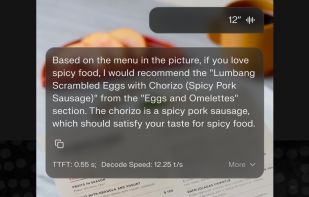

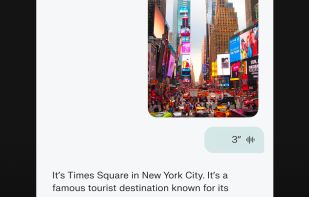

The Swiss Army Knife of offline AI. Chat, speak, and generate images. Privacy first, zero internet. Download an LLM and use it on your mobile device. No data ever leaves your phone.

Cost / License

- Free

- Open Source (MIT)

Platforms

- Android

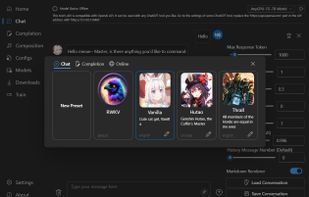

RWKV Chat is described as 'Experience the power of RWKV models directly on your device. Completely offline, privacy-first, and efficient. No internet required.' It is a large language model (LLM) tool in the System & Hardware category. There are more than 25 alternatives to RWKV Chat for a variety of platforms, including Windows, Linux, Mac, Android, and Self-Hosted apps. The best RWKV Chat alternative is Ollama, which is both free and open source. Other great apps like RWKV Chat are Jan.ai, GPT4ALL, AnythingLLM, and LM Studio.

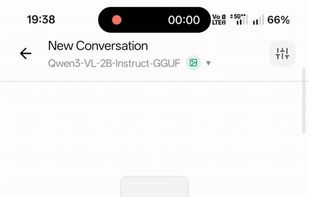

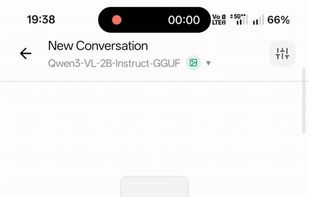

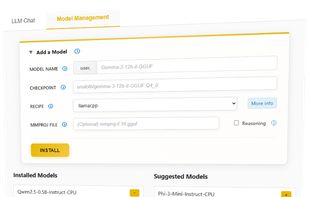

Run frontier LLMs and VLMs with day-0 model support across GPU, NPU, and CPU, with comprehensive runtime coverage for PC (Python/C++), mobile (Android & iOS), and Linux/IoT (Arm64 & x86 Docker). Supports OpenAI GPT-OSS, IBM Granite-4, Qwen-3-VL, Gemma-3n, Ministral-3, and more.

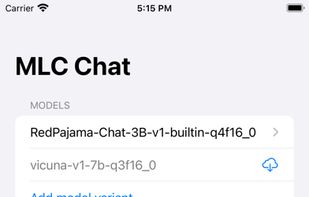

MLC LLM is a machine learning compiler and high-performance deployment engine for large language models. The mission of this project is to enable everyone to develop, optimize, and deploy AI models natively on everyone’s platforms.

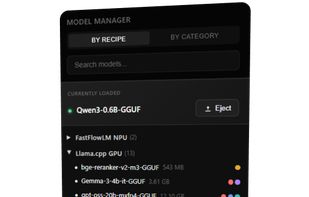

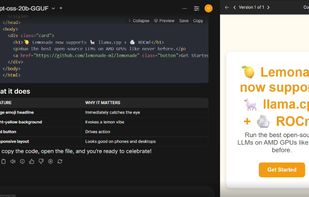

Run LLMs on AMD Ryzen™ AI NPUs in minutes. Just like Ollama, but purpose-built and deeply optimized for AMD NPUs.

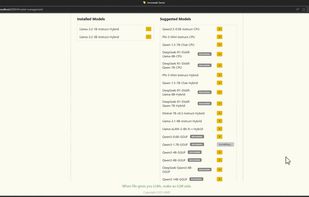

Lemonade helps users discover and run local AI apps by serving optimized LLMs right from their own GPUs and NPUs.

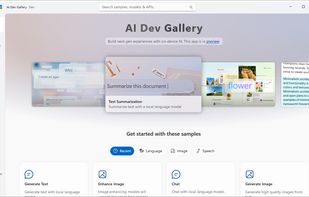

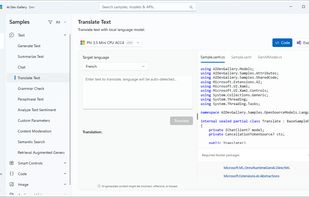

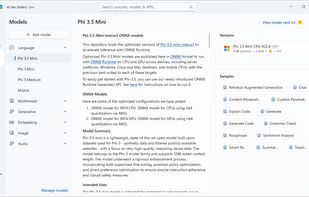

Learn how to add AI with local models and APIs to Windows apps. Discover AI scenarios and models such as Phi, Mistral, Stable Diffusion, Whisper, and many more to delight your users. The AI Dev Gallery is an open-source app designed to help Windows developers integrate AI...

AI00 RWKV Server is an inference API server for the RWKV language model based upon the web-rwkv inference engine.

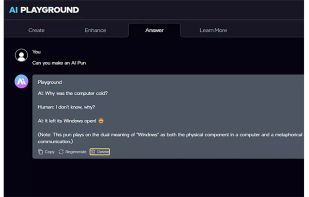

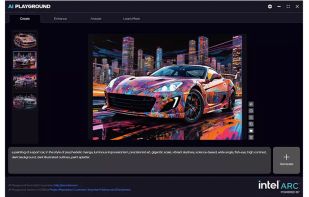

This application provides a full suite of generative AI features for chat, code assistance, document search, image analysis, image and video generation. All features run offline and are powered by your PC’s Intel® Core™ Ultra with built-in Intel Arc GPU or Intel Arc™ dGPU...

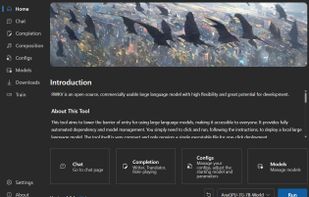

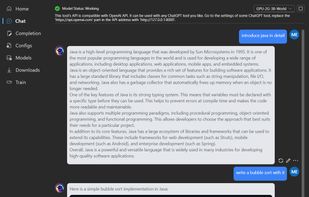

This project aims to eliminate the barriers of using large language models by automating everything for you. All you need is a lightweight executable program of just a few megabytes. Additionally, this project provides an interface compatible with the OpenAI API, which means...

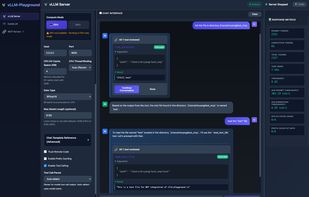

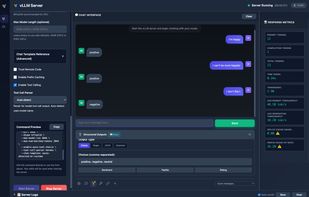

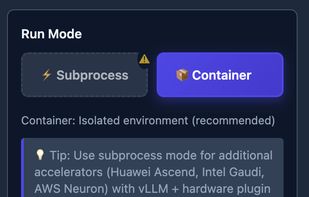

A modern web interface for managing and interacting with vLLM servers (www.github.com/vllm-project/vllm). Supports both GPU and CPU modes, with special optimizations for macOS Apple Silicon and enterprise deployment on OpenShift/Kubernetes.