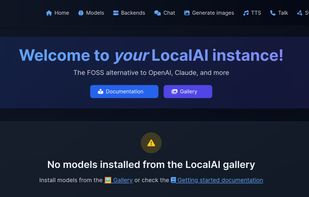

LocalAI

Drop-in OpenAI replacement: on-device and local-first. Generates text, images, speech, music, and more. Backend agnostic (llama.cpp, diffusers, bark.cpp, etc.), with optional distributed inference (P2P/federated).

Features

Properties

- Privacy focused

Features

- Ad-free

- No Tracking

- Text to Image Generation

- Text to Speech

- Works Offline

- Dark Mode

- Image to Image Generation

- AI Writing

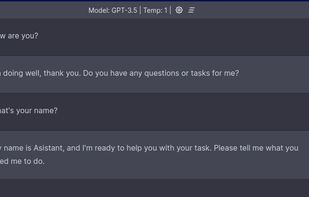

- AI Chatbot

- Speech to text

- Kubernetes

LocalAI News & Activities

Recent News

Recent activities

- heggria added LocalAI as alternative to Hermit Agent

- architaaster added LocalAI as alternative to Ozus PC Assistant

- teletonagent added LocalAI as alternative to Teleton Agent

- dorajlin88 added LocalAI as alternative to Anna AI Agent

LocalAI information

What is LocalAI?

Drop-in replacement for OpenAI API, local/on-prem inference with consumer grade hardware, supporting multiple model families and backends that are compatible with standard formats like GGUF.
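As a rough illustration of what "drop-in replacement" means in practice, the sketch below builds an OpenAI-style chat completion request and points it at a local server instead of OpenAI. The base URL `http://localhost:8080/v1`, the model name `my-local-model`, and both helper functions are assumptions made for this example and are not taken from the listing above; check your own LocalAI setup for the actual address and installed model names.

```python
import json
import urllib.request

# Assumption: a LocalAI server is listening at this address; adjust as needed.
BASE_URL = "http://localhost:8080/v1"


def build_chat_request(prompt, model="my-local-model", base_url=BASE_URL):
    """Build an OpenAI-style chat completion request without sending it."""
    payload = {
        # Hypothetical model name; use one that is installed on your server.
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_chat_request(request, timeout=60):
    """Send the request and return the text of the first reply."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    # OpenAI-style responses put the reply under choices[0].message.content.
    return body["choices"][0]["message"]["content"]
```

With a server running, `send_chat_request(build_chat_request("Hello"))` would return the model's reply. Because the request shape follows the OpenAI API, existing OpenAI client code can usually be redirected by changing only the base URL.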

In a nutshell:

-

Local, OpenAI drop-in alternative REST API. You own your data.

-

NO GPU Required & Local/On-Device Inference (Offline).

-

Optional, GPU/NPU Acceleration is available in llama.cpp-compatible LLMs. See also the build section.

-

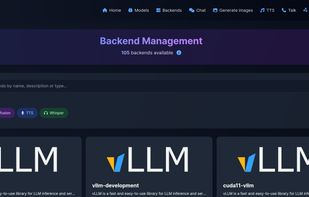

Model Inference Pipeline/Backend Agnostic! (install inference backends through Gallery WebUI or via the CLI)

-

Task Type's Supported:

-

Text generation (with llama.cpp, transformers, vllm, exllama2, gpt4all.cpp... and more)

-

Text to Audio:

-

Sound/Music generation (transformers-musicgen)

-

Speech generation (whisper, bark, piper, bark.cpp)

-

Speech to Text (i.e: transcription, with whisper.cpp, etc...)

-

Image generation with diffusers/stable-diffusion.cpp (text-to-image, image-to-image, etc...)

-

Text Embedding (with sentencetransformers, transformers)

-

Text Re-Ranking (rerankers, sentencetransformers)

-

Once loaded the first time, it keep models loaded in memory for faster inference

-

Distributed Inference (Federated and P2P mode)

Additional Notes:

- Performance and throughput vary with the inference pipeline chosen. C/C++-based pipelines such as llama.cpp can give faster inference and better performance. Read the LocalAI docs for the most up-to-date information.

Comments and Reviews

Looks promising. Has a nice webpage. Uses 1000% of your CPU just launching or downloading GPT models. Unable to download large models because, for some reason, downloading a model requires full load as well, even when not using any AI.

All I am pointing out: this feels really beta and is not working well. gpt4all was much better.