FLAP AI

Fine-tune any LLM on your local GPU — no cloud, no bills, no data leaving your machine.

Cost / License

- One-time payment or subscription

- Proprietary

Platforms

- Online

- Software as a Service (SaaS)

Features

Properties

- Privacy focused

- Distraction-free

- Lightweight

Features

- No Coding Required

- Cloud Sync

- Dark Mode

- No Tracking

- Works Offline

- Live Preview

- Syntax Highlighting

- Marketing Automation

- Support for Markdown

- Spell Checking

- AI-Powered

FLAP AI information

What is FLAP AI?

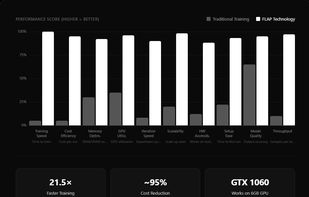

FLAP is a local LLM fine-tuning platform that runs entirely on your own GPU. Using memory-mapped parameter sharding, FLAP trains models from 1B to 670B+ parameters on as little as 6 GB of VRAM, with no cloud infrastructure required. The process is fully automatic.
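The listing does not describe how FLAP implements memory-mapped parameter sharding. As a rough illustration of the general idea only, the sketch below stores parameters in several shard files and memory-maps one shard at a time during an update, so only the active shard has to be resident in memory. The names `write_shards`, `sgd_step_sharded`, `read_shards`, and `grad_fn` are hypothetical, and the plain SGD update is an assumption chosen for brevity, not FLAP's actual training method.

```python
import mmap
import os
import struct
import tempfile

FLOAT_SIZE = 4  # bytes per native single-precision float


def write_shards(params, shard_dir, shard_size):
    """Split a flat list of floats into fixed-size binary shard files."""
    paths = []
    for i, start in enumerate(range(0, len(params), shard_size)):
        chunk = params[start:start + shard_size]
        path = os.path.join(shard_dir, f"shard_{i}.bin")
        with open(path, "wb") as f:
            f.write(struct.pack(f"{len(chunk)}f", *chunk))
        paths.append(path)
    return paths


def sgd_step_sharded(paths, grad_fn, lr):
    """Apply one SGD update, memory-mapping a single shard at a time.

    grad_fn(offset, n) is a hypothetical callback returning n gradient
    values for the parameters starting at the given global offset.
    """
    offset = 0
    for path in paths:
        with open(path, "r+b") as f, mmap.mmap(f.fileno(), 0) as mm:
            # View the mapped bytes as floats; writes go to the mapping.
            with memoryview(mm) as raw, raw.cast("f") as view:
                n = len(view)
                grads = grad_fn(offset, n)
                for j in range(n):
                    view[j] -= lr * grads[j]
            mm.flush()  # push the updated shard back to its file
        offset += n


def read_shards(paths):
    """Read every shard back into one flat list (for inspection only)."""
    values = []
    for path in paths:
        with open(path, "rb") as f:
            data = f.read()
        values.extend(struct.unpack(f"{len(data) // FLOAT_SIZE}f", data))
    return values


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as shard_dir:
        shard_paths = write_shards([1.0] * 10, shard_dir, shard_size=4)
        sgd_step_sharded(shard_paths, lambda offset, n: [1.0] * n, lr=0.5)
        print(read_shards(shard_paths))
```

In this toy version the peak working set is bounded by `shard_size` rather than by the total parameter count. That is the property a sharded design relies on when the model is larger than the available memory.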

Key advantages over cloud-based alternatives:

- ~95% cost reduction vs. AWS/GCP/Azure GPU instances

- 21.5× faster training than traditional fine-tuning

- Complete data privacy: nothing leaves your machine

- Supports Llama, Mistral, Qwen, Gemma, and more

- Free tier: 3 training jobs per month

FLAP is an alternative to Replicate, Modal, RunPod, and Lambda Labs for developers who want to fine-tune LLMs without paying per GPU-hour or sending proprietary data to third-party servers.

Works on Windows, macOS, and Linux with any NVIDIA GPU (6 GB+ VRAM). Web dashboard included.