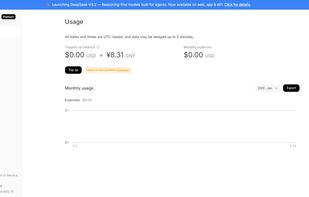

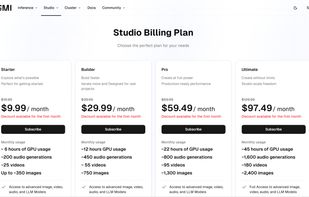

Seamlessly connect to multiple models through a single gateway with failproof routing, cost control, and instant usage insights.

Cost / License

- Paid

- Proprietary

Platforms

- Online
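
The single-gateway, fail-proof routing described for this service can be illustrated as an ordered fallback chain. This is a minimal sketch under stated assumptions, not the gateway's actual routing logic: each upstream model endpoint is modeled as a plain Python callable, and the names `route_with_fallback`, `AllProvidersFailed`, `flaky`, and `healthy` are hypothetical, invented for this example.

```python
from __future__ import annotations

from typing import Callable, Sequence


class AllProvidersFailed(Exception):
    """Raised when every provider in the fallback chain has failed."""


def route_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each (name, call) pair in order and return (provider_name, reply).

    Any exception raised by a provider (timeout, rate limit, outage, ...)
    is recorded and the next provider in the list is tried.
    """
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stand-ins for two upstream model endpoints.
def flaky(prompt: str) -> str:
    raise TimeoutError("upstream timed out")


def healthy(prompt: str) -> str:
    return f"echo: {prompt}"


print(route_with_fallback("hi", [("primary", flaky), ("backup", healthy)]))
# ('backup', 'echo: hi')
```

Keeping the providers in an ordered list makes the priority explicit. A production gateway would typically also add per-call timeouts, retries with backoff, and health tracking, all of which are omitted here.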


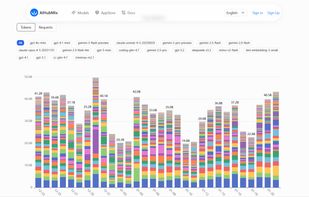

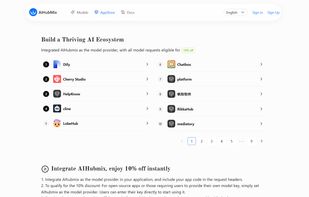

Welcome to AIHubMix, your reliable AI model API routing service. We use the OpenAI API as a standardized interface, so we can handle all models officially supported by OpenAI. AIHubMix also incorporates other leading large language models on the market.
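
Because the interface is the OpenAI API, a client typically only needs a different base URL and key to talk to such a gateway. The sketch below builds, but does not send, an OpenAI-style chat-completions request using only the Python standard library. The base URL `https://gateway.example.com/v1`, the key, the model ID, and the helper name `build_chat_request` are placeholders assumed for illustration, not AIHubMix's documented values.

```python
import json
import urllib.request


def build_chat_request(
    base_url: str, api_key: str, model: str, user_message: str
) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat-completions request.

    Only base_url (and the key) should differ between OpenAI-compatible
    services; the path, headers, and JSON body follow the OpenAI format.
    """
    body = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder values; use the base URL, key, and model ID from your provider.
req = build_chat_request(
    "https://gateway.example.com/v1", "sk-placeholder", "your-model-id", "Hello!"
)
print(req.full_url)      # https://gateway.example.com/v1/chat/completions
print(req.get_method())  # POST
# To actually send it: urllib.request.urlopen(req)
```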

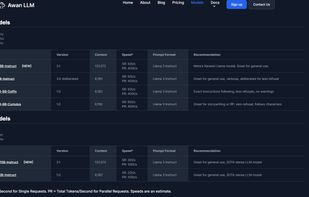

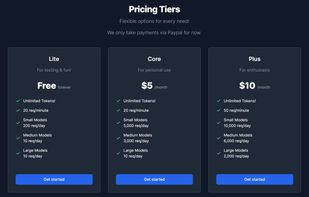

Awan LLM is a cost-effective large language model API provider offering flexible pricing tiers from free testing to scalable enterprise use.

Cerebras is an AI infrastructure company that builds wafer-scale processors delivering ultra-fast, cost-efficient training and inference for large models.

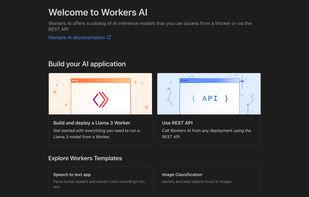

A serverless AI platform that runs models on Cloudflare's network, offering over 50 open-source models and a comprehensive suite for global application deployment.

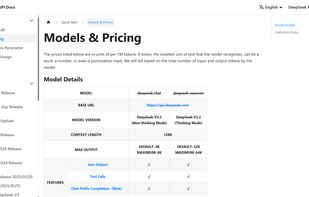

The DeepSeek API provides developers with direct access to DeepSeek’s advanced AI models, enabling them to run text, code, and multimodal tasks through simple endpoints for seamless integration into applications.

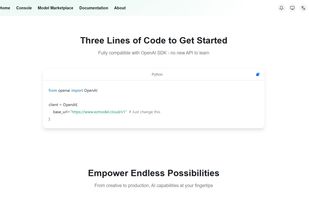

Abstract away the complexity and focus on building great products. Fully compatible with the OpenAI SDK: no new API to learn. From creative projects to production, AI capabilities are at your fingertips.

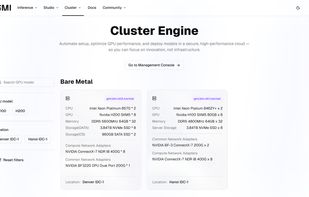

Build and deploy generative AI on the fastest and most efficient inference engine, and fine-tune and switch between models at no extra cost.

You’re about to supercharge your AI models with lightning-fast inference. Inference Engine makes it easy to scale and optimize your models for real-time performance. Let’s get started: your AI is ready to shine.

Google AI Studio is the fastest way to start building with Gemini, our next-generation family of multimodal generative AI models.

Hugging Face is an online community dedicated to advancing AI and democratizing good machine learning. Hugging Face empowers the field of AI through various open-source developments, free and low-cost hosting of machine learning resources and by providing an accessible and...

Groq is a technology company offering GroqCloud, a high-performance inference platform designed to deliver ultra-fast, low-cost AI model execution.

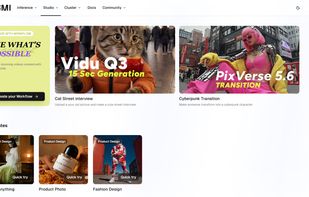

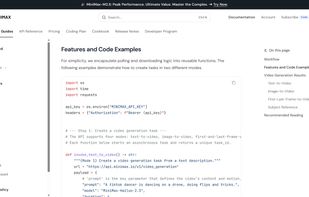

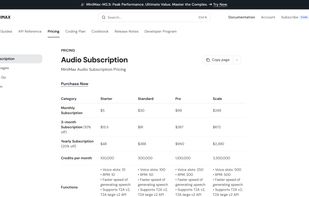

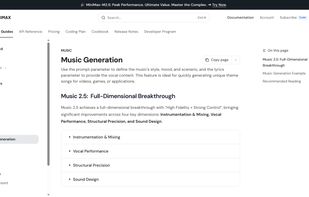

MiniMax Platform is a versatile AI ecosystem offering advanced models for text, speech, video, and music generation, optimized for coding, creative expression, and immersive interaction.

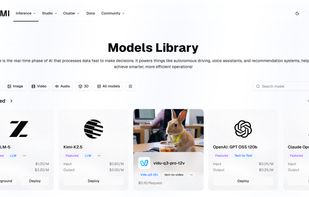

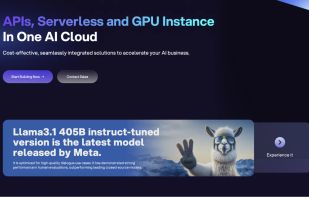

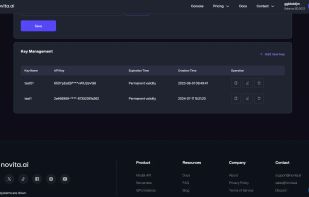

Access 200+ AI models with one API. Launch secure agent sandboxes and GPU instances in minutes. Built for developers, priced for startups.

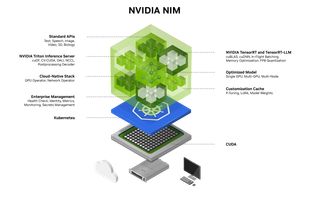

NVIDIA NIM is a set of accelerated inference microservices that allow organizations to run AI models on NVIDIA GPUs anywhere.

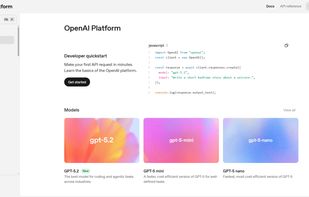

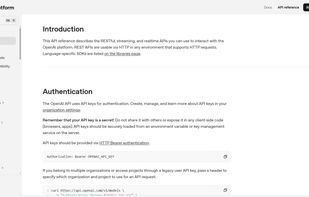

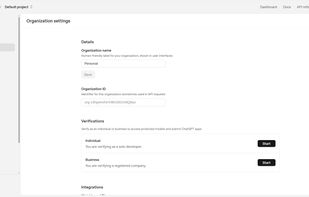

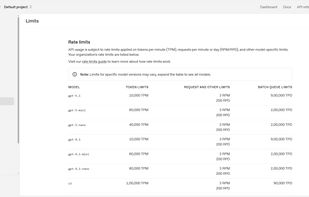

OpenAI Platform’s ChatGPT API lets you integrate GPT-5 series models to generate text, analyze and create images, work with audio, produce structured outputs, and build tool-using agents.
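
Responses in the OpenAI Chat Completions format carry the generated text under `choices[0].message.content` and token counts under `usage`, which is what usage tracking and cost estimates are built on. The sketch below parses an illustrative, hand-written response body (the values are made up) and estimates cost from per-million-token prices. The prices and the helper name `summarize` are hypothetical; check the provider's pricing page for real rates.

```python
import json

# Illustrative response body in the Chat Completions shape (made-up values;
# real responses contain additional fields such as id, model, and created).
raw = """
{
  "choices": [
    {"index": 0, "message": {"role": "assistant", "content": "Hi there!"},
     "finish_reason": "stop"}
  ],
  "usage": {"prompt_tokens": 12, "completion_tokens": 4, "total_tokens": 16}
}
"""


def summarize(response_text: str, usd_per_m_in: float, usd_per_m_out: float):
    """Return (reply_text, total_tokens, estimated_cost_usd).

    usd_per_m_in / usd_per_m_out are hypothetical prices per million
    input (prompt) and output (completion) tokens.
    """
    data = json.loads(response_text)
    reply = data["choices"][0]["message"]["content"]
    usage = data["usage"]
    cost = (
        usage["prompt_tokens"] * usd_per_m_in
        + usage["completion_tokens"] * usd_per_m_out
    ) / 1_000_000
    return reply, usage["total_tokens"], cost


reply, tokens, cost = summarize(raw, usd_per_m_in=2.0, usd_per_m_out=8.0)
print(reply, tokens, f"${cost:.6f}")  # Hi there! 16 $0.000056
```

Here the estimate is (12 × 2.0 + 4 × 8.0) / 1,000,000 = $0.000056; input and output tokens are priced separately because many providers charge different rates for each.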

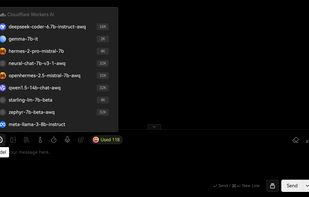

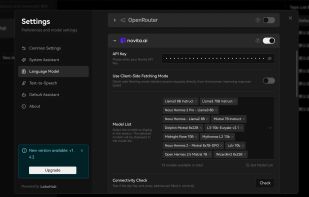

OpenRouter (openrouter.ai) is an AI model aggregator service that provides developers and users with access to a wide range of large language models and generative 3D object models through a unified API. It allows users to interact with various AI models, including those from major...

Run and fine-tune generative AI models with easy-to-use APIs and highly scalable infrastructure. Train and deploy models at scale on our AI Acceleration Cloud and scalable GPU clusters. Optimize performance and cost.

Use cases for LLM API keys include, but are not limited to, agentic coding, simple AI chat, and UI/UX development.