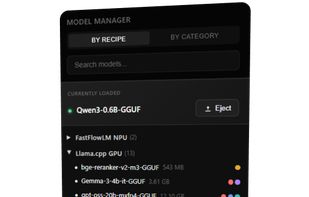

Run LLMs on AMD Ryzen™ AI NPUs in minutes. Like Ollama, but purpose-built and deeply optimized for AMD NPUs.

Cost / License

- Free Personal

- Open Source

Platforms

- Windows

- Online

- Self-Hosted

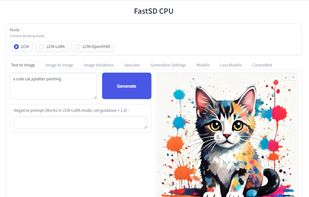

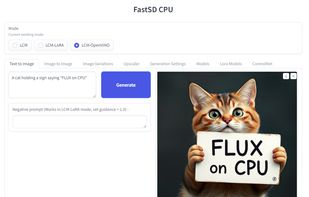

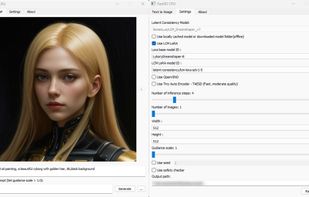

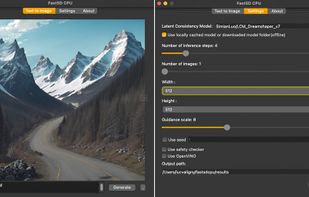

FastSD CPU is a faster version of Stable Diffusion on CPU, based on Latent Consistency Models and Adversarial Diffusion Distillation.

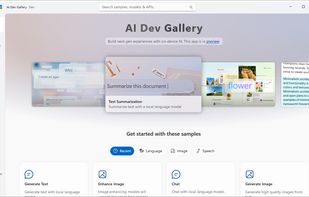

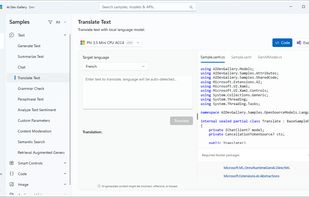

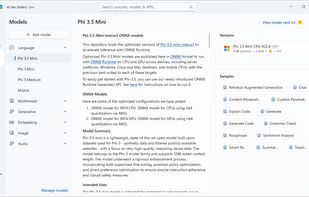

Learn how to add AI with local models and APIs to Windows apps. Discover AI scenarios and models such as Phi, Mistral, Stable Diffusion, Whisper, and many more to delight your users. The AI Dev Gallery is an open-source app designed to help Windows developers integrate AI...

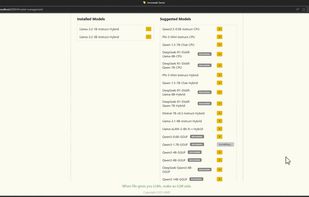

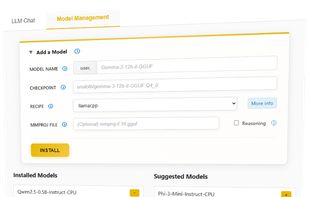

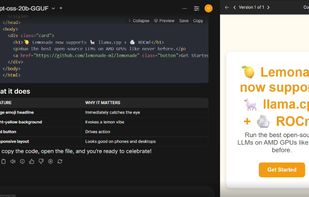

Lemonade helps users discover and run local AI apps by serving optimized LLMs right from their own GPUs and NPUs.

Experience the power of RWKV models directly on your device. Completely offline, privacy-first, and efficient. No internet required.

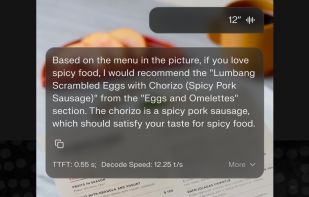

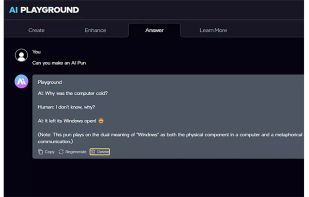

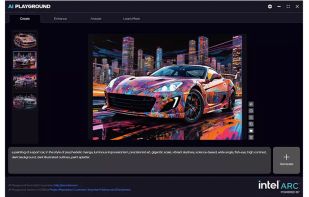

This application provides a full suite of generative AI features for chat, code assistance, document search, image analysis, and image and video generation. All features run offline and are powered by your PC’s Intel® Core™ Ultra with built-in Intel Arc GPU or Intel Arc™ dGPU...

LLM Hub is an open-source Android app for on-device LLM chat and image generation. It's optimized for mobile usage (CPU/GPU/NPU acceleration) and supports multiple model formats so you can run powerful models locally and privately.