Public platform for evaluating large language models using anonymous pairwise comparisons, crowd-sourced voting, real-time result updates, and global performance tracking.

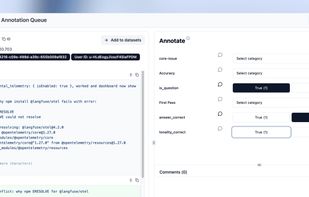

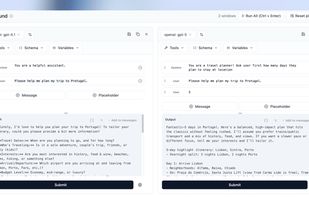

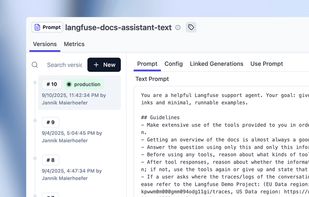

Open-source LLM engineering platform: LLM observability, metrics, evals, prompt management, playground, and datasets. Integrates with OpenTelemetry, LangChain, OpenAI SDK, LiteLLM, and more.

Helicone is the open-source LLM observability platform for developers to monitor, debug, and improve production-ready applications.

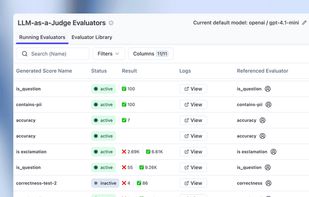

Test AI models yourself, privately, against a standardized benchmark, and get both technical scores and practical recommendations.

Lisapet.ai is the next-level AI product development platform that empowers teams to prototype, test, and ship robust AI features 10x faster.