VoidLLM

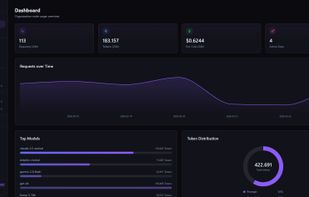

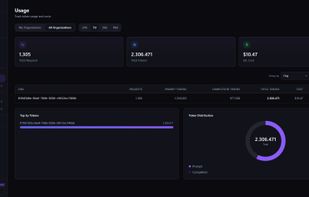

Privacy-first LLM proxy and AI gateway — load balancing, multi-provider routing, API key management, usage tracking, rate limiting. Self-hosted. Zero knowledge of your prompts.

Cost / License

- Free

- Open Source

Platforms

- Windows

- Mac

- Linux

- Self-Hosted

- Docker

Features

- Golang

- Kubernetes

Support for Docker

VoidLLM News & Activities

Recent activities

- WarmBed added VoidLLM as alternative to BazaarLink

- christianromeni added VoidLLM

christianromeni added VoidLLM as alternative to liteLLM and OpenRouter

VoidLLM information

What is VoidLLM?

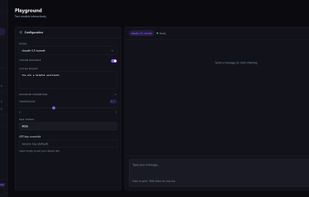

VoidLLM is an LLM gateway and proxy designed to give developers full control over how large language models are accessed, routed, and secured.

It acts as a unified entry point for multiple LLM providers (local or cloud), exposing a single, consistent API while handling routing, load balancing, and access control behind the scenes.
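This is a minimal Go sketch of round-robin load balancing among upstreams that serve the same model. It only illustrates the routing idea and is not VoidLLM's code. The `Upstream` and `Router` types, the `Pick` method, and the model names are hypothetical.

```go
package main

import (
	"errors"
	"fmt"
	"sync"
)

// Upstream is a hypothetical backend (local inference server or cloud API)
// that can serve one or more models. Names here are illustrative only.
type Upstream struct {
	Name   string
	Models []string
}

// Router picks an upstream for a requested model, rotating round-robin
// among all upstreams that serve that model.
type Router struct {
	mu        sync.Mutex
	upstreams []Upstream
	next      map[string]int // per-model rotation counter
}

func NewRouter(ups []Upstream) *Router {
	return &Router{upstreams: ups, next: make(map[string]int)}
}

// ErrNoUpstream is returned when no configured upstream serves the model.
var ErrNoUpstream = errors.New("no upstream serves this model")

// Pick returns the next upstream in rotation that serves the given model.
func (r *Router) Pick(model string) (Upstream, error) {
	r.mu.Lock()
	defer r.mu.Unlock()

	// Collect every upstream that lists the requested model.
	var candidates []Upstream
	for _, u := range r.upstreams {
		for _, m := range u.Models {
			if m == model {
				candidates = append(candidates, u)
				break
			}
		}
	}
	if len(candidates) == 0 {
		return Upstream{}, ErrNoUpstream
	}
	// Rotate through the candidates using the per-model counter.
	u := candidates[r.next[model]%len(candidates)]
	r.next[model]++
	return u, nil
}

func main() {
	r := NewRouter([]Upstream{
		{Name: "local-a", Models: []string{"model-x"}},
		{Name: "local-b", Models: []string{"model-x"}},
		{Name: "cloud", Models: []string{"model-y"}},
	})
	for i := 0; i < 3; i++ {
		u, _ := r.Pick("model-x")
		fmt.Println(u.Name) // alternates local-a, local-b, local-a
	}
	if _, err := r.Pick("unknown"); err != nil {
		fmt.Println(err)
	}
}
```

A real gateway would likely also weigh upstream health and latency. Plain round-robin is used here only because it is the simplest correct balancing strategy to show.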

VoidLLM is built with a strong focus on privacy and transparency: it does not log prompts by default and gives complete control over data flow, which makes it suitable for sensitive or enterprise environments.

Key features include:

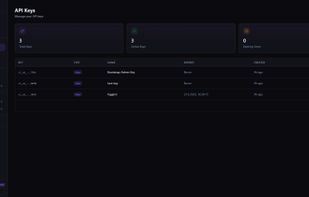

- Unified API layer for multiple LLM providers (OpenAI-compatible, local models, etc.)

- MCP (Model Context Protocol) support for structured tool access

- Proxy & gateway architecture for routing, filtering, and managing LLM traffic

- RBAC and access control for teams and organizations

- Pluggable backends (local models, self-hosted, or cloud APIs)

- No vendor lock-in: bring your own models and providers

- Designed for self-hosting and full infrastructure control
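Role-based access control in a gateway often combines what a role may do with per-team restrictions such as a model allow-list. This hedged Go sketch shows only that general idea. The roles, actions, `Team` type, and `Allowed` function are hypothetical and do not describe VoidLLM's actual permission model.

```go
package main

import "fmt"

// Role is a hypothetical role name; real deployments define their own.
type Role string

const (
	RoleAdmin  Role = "admin"
	RoleMember Role = "member"
	RoleViewer Role = "viewer"
)

// Action is something a caller may try to do through the gateway.
type Action string

const (
	ActionCallModel  Action = "call_model"
	ActionManageKeys Action = "manage_keys"
	ActionViewUsage  Action = "view_usage"
)

// permissions maps each role to the actions it may perform.
// Unknown roles get a nil map, so every lookup for them returns false.
var permissions = map[Role]map[Action]bool{
	RoleAdmin:  {ActionCallModel: true, ActionManageKeys: true, ActionViewUsage: true},
	RoleMember: {ActionCallModel: true, ActionViewUsage: true},
	RoleViewer: {ActionViewUsage: true},
}

// Team restricts which models its members may call.
type Team struct {
	Name          string
	AllowedModels map[string]bool
}

// Allowed reports whether a user with role r in team t may perform action a.
// For model calls, the model must also be on the team's allow-list.
func Allowed(r Role, t Team, a Action, model string) bool {
	if !permissions[r][a] {
		return false
	}
	if a == ActionCallModel {
		return t.AllowedModels[model]
	}
	return true
}

func main() {
	team := Team{Name: "research", AllowedModels: map[string]bool{"model-a": true}}
	fmt.Println(Allowed(RoleMember, team, ActionCallModel, "model-a")) // true
	fmt.Println(Allowed(RoleMember, team, ActionCallModel, "model-b")) // false
	fmt.Println(Allowed(RoleMember, team, ActionManageKeys, ""))       // false
	fmt.Println(Allowed(RoleAdmin, team, ActionManageKeys, ""))        // true
}
```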

VoidLLM is ideal for developers and teams who want to:

- centralize LLM usage

- enforce security and compliance policies

- avoid exposing API keys directly

- maintain full ownership of their data and AI workflows