vMLX

vMLX provides features that no other MLX inference app offers, LM Studio included: KV cache quantization (2-4x less cache memory), prefix caching, and full vision-language (VL) support.

What is vMLX?

I built vMLX because every local AI app on the Mac left performance on the table. LM Studio, Ollama, and others use llama.cpp, which is a great project but is not optimized for Apple Silicon's unified memory architecture.

vMLX is a native macOS inference engine built on MLX with a 5-layer caching stack:

- Prefix caching — reuse computed KV entries across turns

- Paged multi-context KV cache — switch conversations without evicting (LM Studio evicts on switch, Ollama has no KV cache)

- KV cache quantization (q4/q8) — 2-4x cache memory savings

- Continuous batching — up to 256 concurrent sequences

- Persistent disk cache — survives app restarts
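
To see where a 2-4x cache saving can come from, here is a rough sizing sketch. It is back-of-the-envelope arithmetic, not a vMLX measurement: the layer count, KV head count, and head dimension are assumptions chosen to match Llama-3.2-3B's published config, and the per-group scale overhead that real quantized caches carry is ignored.

```python
# Rough KV cache sizing. The model shape is an assumption (intended to match
# Llama-3.2-3B); it is not read from vMLX or from a model file.
LAYERS = 28
KV_HEADS = 8
HEAD_DIM = 128


def kv_cache_bytes(tokens: int, bits_per_value: int) -> int:
    """Bytes for keys + values across all layers, ignoring quantization scales."""
    values_per_token = 2 * LAYERS * KV_HEADS * HEAD_DIM  # 2 = keys and values
    return tokens * values_per_token * bits_per_value // 8


if __name__ == "__main__":
    tokens = 100_000
    fp16 = kv_cache_bytes(tokens, 16)
    for name, bits in [("fp16", 16), ("q8", 8), ("q4", 4)]:
        size = kv_cache_bytes(tokens, bits)
        print(f"{name}: {size / 1e9:.2f} GB ({fp16 / size:.0f}x smaller than fp16)")
```

Under these assumptions a 100K-token cache is about 11.5 GB at 16 bits per value, so q8 halves it and q4 quarters it, which is the 2-4x range quoted in the list.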

Benchmarks on M3 Ultra with Llama-3.2-3B-4bit at 100K context:

- Cold TTFT: 0.65s (vMLX) vs 131s (LM Studio), 224x faster

- Warm TTFT at 2.5K: 0.05s (9.7x faster)
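
Assuming "warm" here means the prompt's prefix is already in the cache, the gain comes from skipping prefill for tokens seen before. The toy sketch below illustrates that idea only. `PrefixCache` and its string "KV state" handles are hypothetical stand-ins, not vMLX's data structures, and a real engine would match on blocks or hashes rather than scanning every prefix length.

```python
from typing import Dict, List, Optional, Tuple


class PrefixCache:
    """Toy prefix cache: maps a token prefix to an opaque KV-state handle."""

    def __init__(self) -> None:
        self._store: Dict[Tuple[int, ...], str] = {}

    def put(self, tokens: List[int], kv_state: str) -> None:
        """Remember the KV state computed for exactly this token sequence."""
        self._store[tuple(tokens)] = kv_state

    def longest_prefix(self, tokens: List[int]) -> Tuple[int, Optional[str]]:
        """Return (matched_length, kv_state) for the longest cached prefix."""
        for n in range(len(tokens), 0, -1):
            state = self._store.get(tuple(tokens[:n]))
            if state is not None:
                return n, state
        return 0, None


def tokens_to_prefill(cache: PrefixCache, tokens: List[int]) -> int:
    """How many tokens still need prefill after reusing the cached prefix."""
    matched, _ = cache.longest_prefix(tokens)
    return len(tokens) - matched
```

For example, after `cache.put([1, 2, 3, 4], "turn-1")`, a follow-up prompt `[1, 2, 3, 4, 5, 6]` matches four tokens and leaves only two to prefill.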

It also has built-in agentic coding tools — 20+ MCP tools for file I/O, shell execution, browser automation, web search, and code editing. No other local AI app does this. Models can autonomously read, write, and edit your codebase.

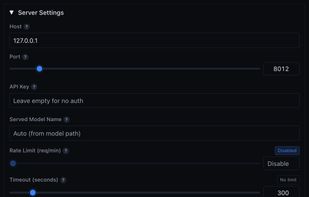

OpenAI-compatible API at localhost:8000: works as a drop-in backend for Cursor, Continue, and Aider. It exposes 7 API endpoints versus the usual 1.
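
Because the API is OpenAI-compatible, a plain HTTP client should be enough to talk to it. The sketch below uses only the Python standard library. The `/v1/chat/completions` path and the response shape are assumptions based on the usual OpenAI convention, and `local-model` is a hypothetical placeholder for whatever model id the app reports; only the host and port come from the text above.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8000"   # host and port as stated above
CHAT_PATH = "/v1/chat/completions"   # assumed: the usual OpenAI-style path
MODEL = "local-model"                # hypothetical model id


def build_chat_request(prompt: str) -> urllib.request.Request:
    """Build (but do not send) a single-turn chat completion request."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        BASE_URL + CHAT_PATH,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str) -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=60) as resp:
        body = json.load(resp)
    # Assumes the OpenAI-style response layout.
    return body["choices"][0]["message"]["content"]
```

With the app running, `chat("Say hello in one sentence.")` would return the model's reply. Tools such as Cursor, Continue, or Aider would instead be pointed at the same base URL in their own settings.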

Free, runs on any Apple Silicon Mac. No API keys, no cloud dependency.