Tenth Man

Most AI tools optimize for agreement. Tenth Man optimizes for dissent.

Three agents - Strategist, Skeptic, Synthesizer - run structured adversarial analysis on your decision.

The Strategist makes the case for action. The Skeptic attacks assumptions. The Synthesizer makes a call.

Cost / License

- Freemium (Subscription)

- Proprietary

Platforms

- Online

What is Tenth Man?

Confidence is capped by what's unresolved, not by how good the answer sounds.

No chat. No "it depends." A real decision brief.

I built Tenth Man because I kept watching smart founders make bad decisions when no one in the room pushed back.

Every advisor in the room agreed. Every AI tool they consulted found a way to validate the plan. And there was no structural mechanism to force anyone to assume the crowd was wrong.

The Tenth Man doctrine comes from military intelligence: if nine people agree, the tenth must disagree. The assumption is that consensus, by itself, is a blind spot.

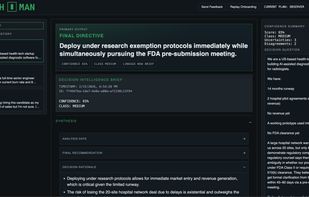

So I built a three-agent, three-model system where dissent is mandatory. A Strategist makes the case for action. A Skeptic attacks assumptions, incentives, and weak logic. A Synthesizer makes a call and owns the risk. Confidence is capped mechanically by what's unresolved - the system fails loudly if it tries to sound more certain than the evidence justifies.
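To make the confidence cap concrete, here is a minimal sketch of how such a rule could work. It is not Tenth Man's actual implementation: the names `Brief`, `confidence_cap`, `validate`, and `OverconfidenceError`, and the fixed penalty per unresolved objection, are all assumptions chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Brief:
    """Hypothetical decision brief; field names are illustrative only."""
    recommendation: str
    stated_confidence: float  # what the Synthesizer claims, from 0.0 to 1.0
    unresolved: list[str] = field(default_factory=list)  # objections left open


class OverconfidenceError(ValueError):
    """Raised when a brief claims more confidence than its open issues allow."""


def confidence_cap(unresolved_count: int, penalty: float = 0.15) -> float:
    # Assumed rule: each unresolved objection lowers the ceiling by a fixed
    # amount, and the ceiling never drops below zero.
    return max(0.0, 1.0 - penalty * unresolved_count)


def validate(brief: Brief) -> Brief:
    cap = confidence_cap(len(brief.unresolved))
    if brief.stated_confidence > cap:
        # Fail loudly instead of silently clamping the number.
        raise OverconfidenceError(
            f"stated confidence {brief.stated_confidence:.2f} exceeds cap "
            f"{cap:.2f} with {len(brief.unresolved)} unresolved objection(s)"
        )
    return brief
```

In this sketch, a brief that claims 0.95 confidence while two objections remain open is rejected outright, because the assumed cap for two open objections is about 0.70. Raising an error rather than quietly lowering the number is one way to read "fails loudly": the overconfident brief never reaches the reader.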

It's not a chatbot. You don't ask it questions. You submit a decision, and it tells you what you're missing.

The decisions I had in mind: hiring a senior exec, raising or delaying a round, walking away from a deal, firing a co-founder. The ones where "it depends" is not an answer and the downside is real.