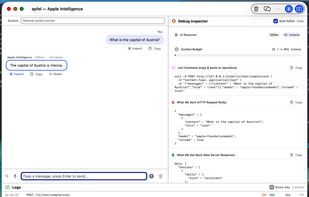

apfel

Access Apple's on-device foundation model through a command-line tool and an OpenAI-compatible HTTP server, with function calling and schema conversion, UNIX-friendly piping, file attachments, JSON output, privacy-preserving on-device inference, and zero recurring costs.

Features

Properties

- Privacy focused

Features

- No registration required

- Works offline

- No Tracking

- Command line interface

- Ad-free

- Apple Silicon support

- OpenAI-compatible API

- Zero configuration

- AI-Powered

- AI Chatbot

- HTTP server

- Model Context Protocol (MCP) Support


What is apfel?

Use the free local Apple Intelligence LLM on your Mac — your model, your machine, your way.

No API keys. No cloud. No subscriptions. No per-token billing. The AI is already on your computer — apfel lets you use it.

What is this?

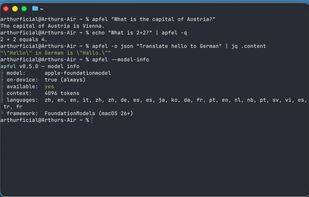

Apple Silicon Macs with Apple Intelligence include a built-in LLM: Apple's on-device foundation model. Apple exposes it to developers through the FoundationModels framework (macOS 26+), but end users can otherwise reach it only through Siri and system features. apfel wraps it in a CLI and an HTTP server so you can use it directly. All inference runs on-device, with no network calls.

- UNIX tool: `echo "summarize this" | apfel`. Pipe-friendly, with file attachments, JSON output, and exit codes.

- OpenAI-compatible server: `apfel --serve` starts a drop-in replacement at localhost:11434 that works with OpenAI SDKs pointed at that address.

- Tool calling: OpenAI-style function calling, with tool schemas converted for the on-device model and full round-trip support.

- Zero cost: no API keys, no cloud, no subscriptions. Note that the model's context window is limited to 4096 tokens.
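Because apfel reads its prompt from standard input, the `echo "summarize this" | apfel` pipeline can also be driven from a script. The sketch below is a minimal Python wrapper that assumes the `apfel` binary is on your `PATH`, prints its answer to standard output, and signals failure with a non-zero exit code. The helper name `ask_apfel` and its `command` parameter are hypothetical, not part of apfel.

```python
import subprocess
from typing import List, Optional


def ask_apfel(prompt: str, command: Optional[List[str]] = None) -> str:
    """Pipe `prompt` to apfel on stdin and return its stdout.

    Hypothetical helper: assumes `apfel` reads the prompt from stdin and
    writes the reply to stdout, as in `echo "summarize this" | apfel`.
    """
    cmd = command if command is not None else ["apfel"]
    result = subprocess.run(
        cmd,
        input=prompt,
        capture_output=True,  # collect stdout and stderr instead of printing them
        text=True,            # exchange str rather than bytes
        check=False,          # we inspect the exit code ourselves below
    )
    if result.returncode != 0:
        # Surface the tool's own error text when it exits non-zero.
        raise RuntimeError(
            f"{cmd[0]} exited with code {result.returncode}: {result.stderr.strip()}"
        )
    return result.stdout.strip()
```

For example, `ask_apfel("Summarize this: ...")` mirrors the `echo` pipeline. The `command` parameter only exists so the wrapper can be pointed at a different binary, for instance when testing without apfel installed.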
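Since the server speaks the OpenAI wire format, a plain HTTP client is enough to talk to it. The sketch below uses only the Python standard library and assumes that `apfel --serve` is running on localhost:11434, that it serves the usual OpenAI route `/v1/chat/completions`, and that it accepts the model name `apple-on-device`. The route, model name, and helper names are assumptions for illustration, not confirmed apfel details.

```python
import json
import urllib.request

# Assumed base URL: the port quoted above plus the standard OpenAI "/v1" prefix.
BASE_URL = "http://localhost:11434/v1"
# Hypothetical model identifier; check what your apfel server actually reports.
MODEL = "apple-on-device"


def build_chat_request(prompt: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str) -> str:
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=60) as resp:
        body = json.load(resp)
    # Standard OpenAI response shape: choices[0].message.content
    return body["choices"][0]["message"]["content"]
```

Splitting request construction from sending keeps the payload easy to inspect. The official `openai` Python package can target the same server by passing `base_url="http://localhost:11434/v1"` and a placeholder `api_key` to its client, assuming the server ignores the key.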
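A tool-calling round trip in the OpenAI format has four steps. The request advertises functions as JSON Schema, the model replies with `tool_calls`, your code runs the function, and the result goes back as a `role: "tool"` message. The sketch below shows the client side of that loop using the standard OpenAI message shapes. The `get_weather` function, its schema, and the handling shown are hypothetical, and it assumes apfel's server accepts and emits these OpenAI fields.

```python
import json


# Hypothetical local function the model may ask us to call.
def get_weather(city: str) -> dict:
    return {"city": city, "forecast": "sunny"}


TOOLS = {"get_weather": get_weather}

# OpenAI-style tool definition: parameters are described with JSON Schema.
TOOL_SPECS = [
    {
        "type": "function",
        "function": {
            "name": "get_weather",
            "description": "Look up the forecast for a city.",
            "parameters": {
                "type": "object",
                "properties": {"city": {"type": "string"}},
                "required": ["city"],
            },
        },
    }
]


def run_tool_calls(assistant_message: dict) -> list:
    """Execute each requested tool call and build the `tool` reply messages."""
    replies = []
    # `tool_calls` may be absent or null when the model answers directly.
    for call in assistant_message.get("tool_calls") or []:
        fn = TOOLS[call["function"]["name"]]
        # Arguments arrive as a JSON-encoded string, not a dict.
        args = json.loads(call["function"]["arguments"])
        replies.append(
            {
                "role": "tool",
                "tool_call_id": call["id"],
                "content": json.dumps(fn(**args)),
            }
        )
    return replies
```

In a full loop you would send `TOOL_SPECS` in the request's `tools` field, append the assistant message and the returned `tool` messages to the conversation, and call the chat endpoint again to get the final answer.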
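The 4096-token window is typically shared between the prompt and the generated reply, so long inputs piped into apfel can overflow it. The sketch below is a rough pre-flight check. It assumes about four characters per token, a common rule of thumb for English text rather than the on-device model's actual tokenizer. The 512-token reply budget is an arbitrary choice, and all helper names are hypothetical.

```python
CONTEXT_WINDOW = 4096  # tokens, per the figure quoted above
CHARS_PER_TOKEN = 4    # rough heuristic, NOT the model's real tokenizer


def estimate_tokens(text: str) -> int:
    """Crude token estimate: ceiling of len(text) / CHARS_PER_TOKEN."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(prompt: str, reply_budget: int = 512) -> bool:
    """True if the prompt plus a reserved reply budget stays within the window."""
    return estimate_tokens(prompt) + reply_budget <= CONTEXT_WINDOW


def chunk_text(text: str, reply_budget: int = 512) -> list:
    """Split text into pieces that each pass fits_in_context (by the estimate)."""
    max_chars = (CONTEXT_WINDOW - reply_budget) * CHARS_PER_TOKEN
    return [text[i : i + max_chars] for i in range(0, len(text), max_chars)]
```

Each chunk could then be sent to apfel separately, for example summarizing every piece and then summarizing the summaries. Because the estimate is only approximate, leaving extra headroom is safer than filling the window exactly.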