You can now use Google Stitch to vibe design UIs on an infinite canvas, using your voice

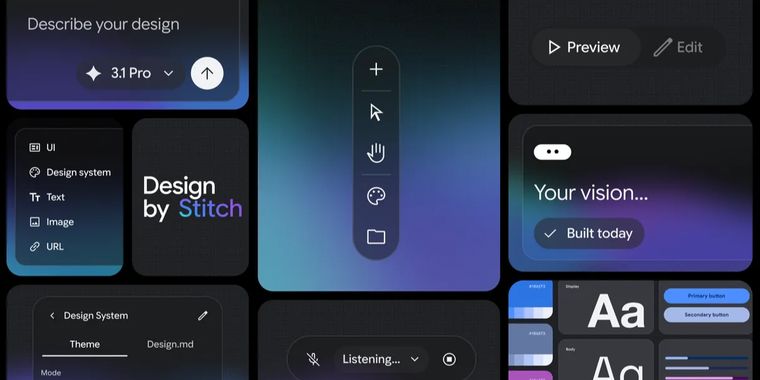

Google is transforming Stitch from an AI-powered design tool into a fully AI-native software design canvas. This evolution aims to let users create, iterate, and collaborate on user interface designs using natural language inputs. Stitch’s new infinite, AI-native canvas now supports workflows from initial ideation to functional prototypes, giving design teams room to explore concepts and evolve their projects fluidly.

A design agent can reason across the context of the entire canvas, tracking how the work has progressed and supporting exploration in multiple directions. A new Agent manager lets users oversee ideas developed in parallel, keeping projects organized as they branch into new directions.

The design system toolkit has also been expanded. You can now extract a design system directly from any URL, or use the new DESIGN.md file format to import and export design rules between Stitch and other design or coding tools. Stitch can also turn static screens into interactive prototypes instantly: by linking screens and clicking “Play”, teams can preview app flows and have Stitch generate logical next screens for the user journey.

Voice capabilities let designers work hands-free, receive real-time feedback, and make updates through conversation. Finally, Stitch connects to broader workflows through its MCP server and SDK, enabling export to tools like AI Studio and Antigravity while supporting third-party extensions.